OAK vs RealSense™

Depth accuracy comparison

Comparison overview

| Specification | OAK-D Pro / -W | OAK-D Lite | OAK ToF | D415 | D435 | D455 |
|---|---|---|---|---|---|---|
| RGB | IMX378 | IMX214 | IMX378 | OV2740 | OV2740 | OV9782 |
| RGB HFOV | 66˚ / 109˚ | 69˚ | 66˚ | 69˚ | 69˚ | 90˚ |
| RGB Shutter | Rolling / Global | Rolling | Rolling | Rolling | Rolling | Global |
| RGB resolution | 12MP | 13MP | 12MP | 2MP | 2MP | 1MP |
| Depth Type | Active Stereo | Passive Stereo | ToF | Active Stereo | Active Stereo | Active Stereo |
| Depth sensor | OV9282 | OV7251 | 33D ToF | OV2740 | OV9282 | OV9782 |
| Stereo Shutter | Global | Global | / | Rolling | Global | Global |
| Stereo baseline | 75mm | 75mm | / | 55mm | 50mm | 95mm |
| Depth HFOV | 72˚ / 127˚ | 72˚ | 70˚ | 65˚ | 87˚ | 87˚ |
| Min Depth | 20 cm | 20 cm | 20 cm | 45 cm | 28 cm | 52 cm |
| Depth resolution | 1280x800 | 640x480 | 640x480 | 1280x720 | 1280x720 | 1280x720 |
| IR LED | ✔️ | - | ✔️ | - | - | - |
| ToF | - | - | ✔️ | - | - | - |
| IMU | ✔️ | - | ✔️ | - | ✔️ | ✔️ |
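The baseline, depth HFOV, and depth resolution rows together set how precisely a stereo camera can triangulate. For a rectified stereo pair, depth is z = f·B/d, where f is the focal length in pixels, B is the baseline, and d is the disparity in pixels; differentiating gives a depth uncertainty of roughly Δz ≈ z²/(f·B)·Δd. The Python sketch below plugs a few rows of the table into these textbook formulas. It assumes an ideal pinhole camera whose HFOV spans the full depth image width, and it ignores subpixel disparity refinement, lens distortion, and projector effects, so the numbers are indicative only, not measured accuracy. `StereoSpec`, `focal_length_px`, `depth_from_disparity`, and `depth_error` are hypothetical helper names, not part of any SDK.

```python
import math
from dataclasses import dataclass


@dataclass
class StereoSpec:
    """Stereo parameters from the comparison table (hypothetical helper type)."""
    name: str
    baseline_m: float  # stereo baseline in metres
    hfov_deg: float    # depth horizontal field of view in degrees
    width_px: int      # depth image width in pixels


def focal_length_px(hfov_deg: float, width_px: int) -> float:
    """Ideal pinhole focal length in pixels: f = (W / 2) / tan(HFOV / 2)."""
    return (width_px / 2) / math.tan(math.radians(hfov_deg) / 2)


def depth_from_disparity(spec: StereoSpec, disparity_px: float) -> float:
    """Triangulated depth z = f * B / d for a rectified stereo pair, in metres."""
    f = focal_length_px(spec.hfov_deg, spec.width_px)
    return f * spec.baseline_m / disparity_px


def depth_error(spec: StereoSpec, depth_m: float, disparity_err_px: float = 1.0) -> float:
    """First-order depth uncertainty dz ~= z^2 / (f * B) * dd, in metres."""
    f = focal_length_px(spec.hfov_deg, spec.width_px)
    return depth_m ** 2 / (f * spec.baseline_m) * disparity_err_px


# Values copied from the table: baseline, depth HFOV, depth image width.
SPECS = [
    StereoSpec("OAK-D Pro", 0.075, 72.0, 1280),
    StereoSpec("OAK-D Lite", 0.075, 72.0, 640),
    StereoSpec("D455", 0.095, 87.0, 1280),
]

if __name__ == "__main__":
    for spec in SPECS:
        err_cm = depth_error(spec, depth_m=4.0) * 100
        print(f"{spec.name}: ~{err_cm:.1f} cm per pixel of disparity error at 4 m")
```

Because Δz grows with z² and shrinks with f·B, a longer baseline, a higher depth resolution, or a narrower FOV each improve far-range precision under this model. The same relation also gives the minimum depth, z_min = f·B/d_max, where d_max is the largest disparity the stereo matcher searches.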

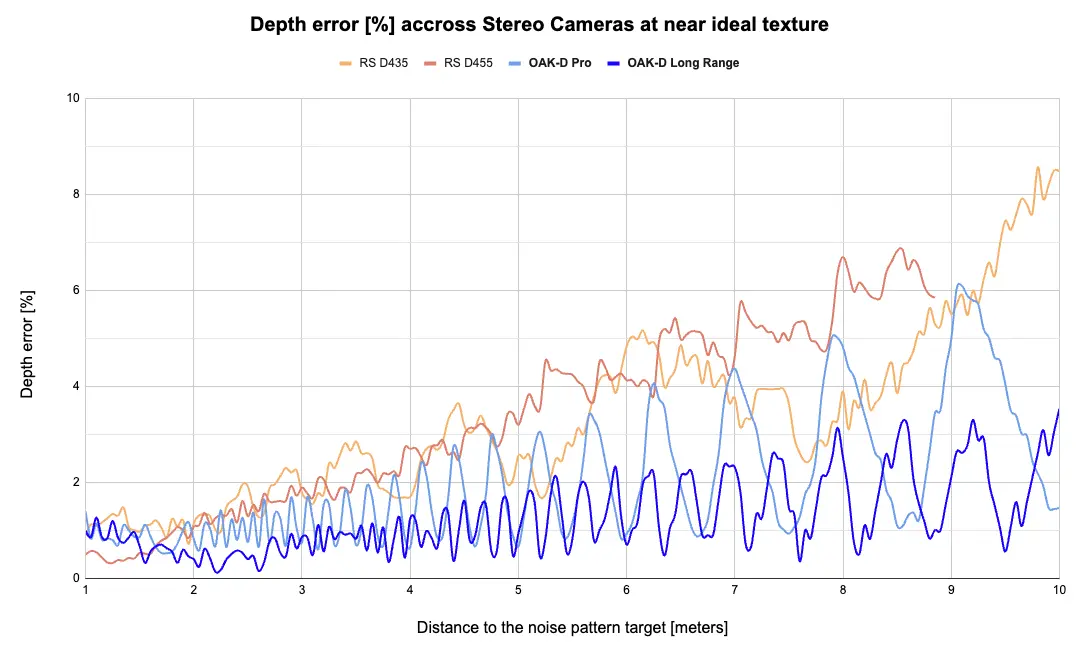

3rd party evaluation

Passive stereo (no IR dot projector)

Active stereo (IR dot projector)

On-device feature comparison

| On-Device Capabilities | OAK-D Pro | OAK-D Lite | D415 | D430-D435 | D450-D455 | F450 |
|---|---|---|---|---|---|---|
| Custom AI models | ✔️ | ✔️ | - | - | - | - |
| Object detection | ✔️ | ✔️ | - | - | - | - |
| Object tracking | ✔️ | ✔️ | - | - | - | - |
| On-device scripting | ✔️ | ✔️ | - | - | - | - |
| Video/Image Encoding | ✔️ | ✔️ | - | - | - | - |
| Image Manipulation | ✔️ | ✔️ | - | - | - | - |
| Skeleton/Hand Tracking | ✔️ | ✔️ | - | - | - | - |
| Feature Tracking | ✔️ | ✔️ | - | - | - | - |
| OCR | ✔️ | ✔️ | - | - | - | - |
| Face Recognition | ✔️ | ✔️ | - | - | - | ✔️ |

Features described

- Custom AI models - You can run any AI/NN model(s) on the device, as long as all layers are supported. You can also choose from 200+ pretrained NN models from Open Model Zoo and DepthAI Model Zoo.

- Object detection - Most popular object detectors have been converted to run on our devices. DepthAI supports onboard decoding of YOLO- and MobileNet-based NN models.

- Object tracking - The ObjectTracker node comes with 4 tracker types and also supports tracking objects in 3D space.

- On-device scripting - The Script node lets users run custom Python 3.9 scripts on the device, typically to manage the flow of the pipeline (business logic).

- Video/Image encoding - The VideoEncoder node encodes frames into MJPEG, H.265, or H.264 format.

- Image Manipulation - The ImageManip node lets users resize, warp, crop, flip, and thumbnail image frames, and convert between frame types (YUV420, NV12, RGB, etc.).

- Skeleton/Hand Tracking - Detect and track key points of a hand or human pose. Geaxgx's demos: Hand tracker, Blazepose, Movenet.

- 3D Semantic segmentation - Perceive the world with semantically labeled pixels. DeepLabV3 demo here.

- 3D Object Pose Estimation - MediaPipe's Objectron has been converted to run on OAK cameras. Video here.

- 3D Edge Detection - The EdgeDetector node uses a Sobel filter to detect edges. With depth information, you can get the physical position of these edges.

- Feature Tracking - The FeatureTracker node detects and tracks key points (features).

- 3D Feature Tracking - With depth information, you can track these features in physical space.

- OCR - Optical character recognition, demo here.

- Face Recognition - Demo here, which runs face detection, alignment, and face recognition (3 different NN models) on the device simultaneously.
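The 3D Edge Detection idea (a Sobel edge map combined with depth to get the physical position of each edge) can be sketched in plain Python as follows. This is not the EdgeDetector node's implementation: it applies the standard 3×3 Sobel kernels on the host, then back-projects each edge pixel with an ideal pinhole model, X = (u - cx)·z/fx, Y = (v - cy)·z/fy. The intrinsics `fx`, `fy`, `cx`, `cy`, the `threshold`, and the helper names `sobel_magnitude` and `edges_to_3d` are hypothetical; on a real device the intrinsics would come from the camera's calibration, and the depth map would need to be aligned to the image the edges were computed on.

```python
import math
from typing import List, Tuple

Image = List[List[float]]
Point3D = Tuple[float, float, float]

# Standard 3x3 Sobel kernels for horizontal (x) and vertical (y) gradients.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_magnitude(img: Image) -> Image:
    """Gradient magnitude for interior pixels; the 1-pixel border is left at 0."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for v in range(1, h - 1):
        for u in range(1, w - 1):
            gx = gy = 0.0
            for j in range(3):
                for i in range(3):
                    p = img[v + j - 1][u + i - 1]
                    gx += SOBEL_X[j][i] * p
                    gy += SOBEL_Y[j][i] * p
            out[v][u] = math.hypot(gx, gy)
    return out


def edges_to_3d(img: Image, depth: Image, fx: float, fy: float,
                cx: float, cy: float, threshold: float) -> List[Point3D]:
    """Back-project edge pixels that have valid depth into camera-space (X, Y, Z) points."""
    mag = sobel_magnitude(img)
    points: List[Point3D] = []
    for v, row in enumerate(mag):
        for u, m in enumerate(row):
            z = depth[v][u]
            # Keep strong edges only, and skip pixels with no depth measurement (z <= 0).
            if m >= threshold and z > 0:
                points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points


if __name__ == "__main__":
    # Synthetic 5x5 image with a vertical step edge, and a flat depth map at 2 m.
    img = [[0, 0, 10, 10, 10] for _ in range(5)]
    depth = [[2.0] * 5 for _ in range(5)]
    for p in edges_to_3d(img, depth, fx=100.0, fy=100.0, cx=2.0, cy=2.0, threshold=20.0):
        print(p)
```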
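Part of decoding object detector output is discarding low-confidence and duplicate boxes. Below is a generic, host-side sketch of confidence filtering followed by greedy non-maximum suppression (NMS) based on intersection-over-union (IoU), in plain Python. It is not DepthAI's onboard YOLO/MobileNet decoder; the `(x1, y1, x2, y2, score)` box format, the default thresholds, and the helper names `iou` and `filter_detections` are assumptions for illustration.

```python
from typing import List, Tuple

# Assumed box format: (x1, y1, x2, y2, score) with x2 > x1 and y2 > y1.
Box = Tuple[float, float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def filter_detections(raw: List[Box], conf_threshold: float = 0.5,
                      iou_threshold: float = 0.5) -> List[Box]:
    """Drop low-confidence boxes, then greedily keep the best non-overlapping ones."""
    candidates = sorted((b for b in raw if b[4] >= conf_threshold),
                        key=lambda b: b[4], reverse=True)
    kept: List[Box] = []
    for box in candidates:
        # Keep a box only if it does not overlap any already-kept box too much.
        if all(iou(box, k) < iou_threshold for k in kept):
            kept.append(box)
    return kept


if __name__ == "__main__":
    raw = [(0, 0, 10, 10, 0.9), (1, 1, 11, 11, 0.8), (20, 20, 30, 30, 0.7), (0, 0, 5, 5, 0.3)]
    print(filter_detections(raw))
```

Greedy NMS keeps the highest-scoring box and drops any later box that overlaps an already kept one by at least `iou_threshold`, so each object ends up with a single detection.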

Modular design