Key capabilities

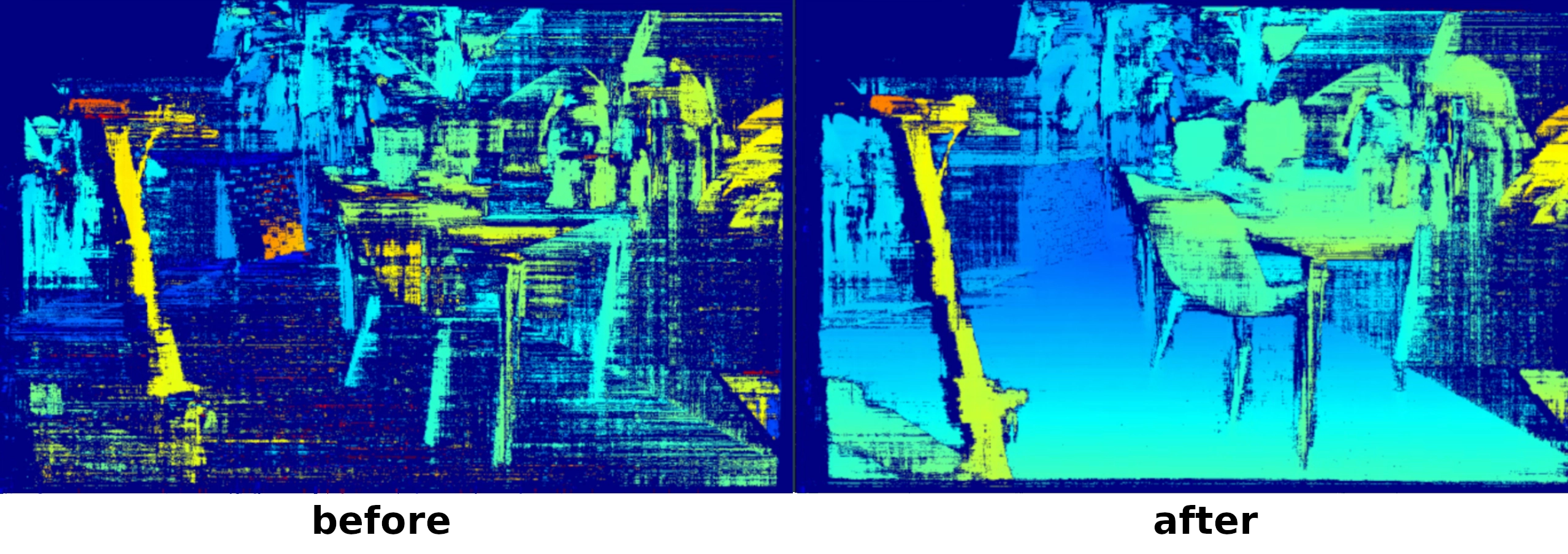

- Restore depth performance—brings the disparity map back to optimal visual quality.

- No targets required—operate in natural scenes; just move the camera to capture a varied view.

- Rapid execution—typically completes in seconds.

- Health monitoring—run diagnostics at any time without flashing new calibration.

Automatic calibration options

Alongside using the DynamicCalibration node directly, automatic calibration can be enabled in three ways:

- Use the AutoCalibration host node for explicit node-level control inside your pipeline.

- Set the `DEPTHAI_AUTOCALIBRATION` environment variable for deployment-time enablement without changing pipeline code.

- Call the direct setter `Pipeline.setAutoCalibrationMode()` to enable automatic calibration without using the AutoCalibration host node.

Starting with DepthAI 3.6, automatic calibration is enabled by default in `ON_START` mode; use `OFF` to disable it. If your pipeline already includes a DynamicCalibration node or an AutoCalibration node, `AutoCalibrationMode` is not applied.

Usage of the Dynamic Calibration Library (DCL)

Use the DynamicCalibration node in DepthAI for dynamic calibration workflows. The node takes a `DynamicCalibrationControl` message as input, carrying a command (e.g. `ApplyCalibration`, `Calibrate`; see the message definition for all available commands). It returns results on four output queues:

- `calibrationOutput` with message type `DynamicCalibrationResult`

- `qualityOutput` with message type `CalibrationQuality`

- `coverageOutput` with message type `CoverageData`

- `metricsOutput` with message type `CalibrationMetrics`

Initializing the DynamicCalibration Node

The DynamicCalibration node requires two synchronized camera streams from the same device. Here's how to set it up:

```python
import depthai as dai

# Initialize the pipeline
pipeline = dai.Pipeline()

# Create camera nodes for the left and right stereo sensors
cam_left = pipeline.create(dai.node.Camera).build(dai.CameraBoardSocket.CAM_B)
cam_right = pipeline.create(dai.node.Camera).build(dai.CameraBoardSocket.CAM_C)

# Request full-resolution NV12 outputs
left_out = cam_left.requestFullResolutionOutput()
right_out = cam_right.requestFullResolutionOutput()

# Initialize the DynamicCalibration node
dyn_calib = pipeline.create(dai.node.DynamicCalibration)

# Link the cameras to the DynamicCalibration node
left_out.link(dyn_calib.left)
right_out.link(dyn_calib.right)

# Read the current calibration from the device and set it on the device
device = pipeline.getDefaultDevice()
calibration = device.readCalibration()
device.setCalibration(calibration)

pipeline.start()
while pipeline.isRunning():
    ...  # send commands and read outputs here
```

Sending Commands to the Node

```python
# Initialize the command input queue
command_input = dyn_calib.inputControl.createInputQueue()
# Example: send a command that starts the calibration process
command_input.send(dai.DynamicCalibrationControl.startCalibration())
```

Available commands:

- `StartCalibration()`: starts the calibration process.

- `StopCalibration()`: stops the calibration process.

- `Calibrate(force=False)`: computes a new calibration based on the loaded data. With `force=True`, no restrictions are placed on the loaded data.

- `CalibrationQuality(force=False)`: evaluates the quality of the current calibration. With `force=True`, no restrictions are placed on the loaded data.

- `LoadImage()`: loads one image from the device.

- `ComputeCalibrationMetrics(calibration)`: computes calibration metrics such as `dataQuality` and `calibrationConfidence`.

- `ApplyCalibration(calibration)`: applies the calibration to the device.

- `SetPerformanceMode(performanceMode)`: sets the performance mode used for calibration.

- `ResetData()`: removes all previously loaded data.
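The commands above compose into a manual calibration flow. The sketch below is a minimal example, not the library's own procedure: it clears old data, loads a few images, requests a calibration and waits for the result. It assumes the lowerCamelCase factory naming seen in `startCalibration()`, `loadImage()` and `calibrate()` also applies to `resetData()`; the helper name `run_manual_calibration` and the `num_images` parameter are hypothetical. The control factory is passed in as `control` so the logic can be exercised without a device.

```python
def run_manual_calibration(command_input, calibration_output, control,
                           num_images=5, force=False):
    """Hypothetical helper: reset data, load images, calibrate, return result.

    command_input      -- queue from dyn_calib.inputControl.createInputQueue()
    calibration_output -- queue from dyn_calib.calibrationOutput.createOutputQueue()
    control            -- normally dai.DynamicCalibrationControl (assumed to
                          provide resetData(), loadImage() and calibrate())
    """
    if num_images < 1:
        raise ValueError("num_images must be at least 1")
    # Drop any data loaded by earlier runs (ResetData command)
    command_input.send(control.resetData())
    # Load images one at a time (LoadImage command). This loop does not pause
    # between loads; a real flow may wait so the camera can be moved.
    for _ in range(num_images):
        command_input.send(control.loadImage())
    # Compute a new calibration from the loaded data (Calibrate command)
    command_input.send(control.calibrate(force=force))
    # Block until the node publishes a DynamicCalibrationResult
    return calibration_output.get()
```

On a device, this would be called as `run_manual_calibration(command_input, calibration_output, dai.DynamicCalibrationControl)` once the pipeline is running.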

Receiving Data from the Node

- `coverageOutput` → coverage statistics (`CoverageData`)

- `calibrationOutput` → calibration results (`DynamicCalibrationResult`)

- `qualityOutput` → calibration quality check (`CalibrationQuality`)

- `metricsOutput` → calibration statistics and data quality (`CalibrationMetrics`)
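The four outputs can also be kept in a lookup table so that every queue is created in one place. A minimal sketch follows; `DYNAMIC_CALIBRATION_OUTPUTS` and `create_output_queues` are hypothetical names, not DepthAI API, and the only method called on each node output is `createOutputQueue()`.

```python
# Output name on the DynamicCalibration node -> message type it carries
DYNAMIC_CALIBRATION_OUTPUTS = {
    "calibrationOutput": "DynamicCalibrationResult",
    "qualityOutput": "CalibrationQuality",
    "coverageOutput": "CoverageData",
    "metricsOutput": "CalibrationMetrics",
}


def create_output_queues(node, names=DYNAMIC_CALIBRATION_OUTPUTS):
    """Hypothetical helper: create one host-side queue per node output.

    Returns a dict keyed by output name, e.g. queues["coverageOutput"].
    Each output is looked up with getattr() and must provide
    createOutputQueue(), as dyn_calib.coverageOutput does.
    """
    return {name: getattr(node, name).createOutputQueue() for name in names}
```

For example, `queues = create_output_queues(dyn_calib)` followed by `queues["coverageOutput"].get()`.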

```python
# Queue for receiving new calibrations
calibration_output = dyn_calib.calibrationOutput.createOutputQueue()
# Queue for receiving coverage data
coverage_output = dyn_calib.coverageOutput.createOutputQueue()
# Queue for checking the calibration quality
quality_output = dyn_calib.qualityOutput.createOutputQueue()
# Queue for checking the calibration metrics
metrics_output = dyn_calib.metricsOutput.createOutputQueue()
```

See the `DynamicCalibrationResult`, `CoverageData`, `CalibrationQuality` and `CalibrationMetrics` message definitions for the exact data structures of the outputs.

Reading Coverage Data

Coverage data is sent to `coverageOutput` whenever an image is loaded, either manually (with the `LoadImage` command) or during continuous calibration (after sending the `StartCalibration` command).

Manual Image Load

```python
# Load a single image
command_input.send(dai.DynamicCalibrationControl.loadImage())

# Get coverage after loading
coverage = coverage_output.get()
print(f"Coverage = {coverage.meanCoverage}")
```
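A manual flow typically repeats `LoadImage` until coverage is sufficient. The sketch below assumes that each `loadImage()` command produces exactly one `CoverageData` message on `coverageOutput`, and that `meanCoverage` is a number that grows as more of the FOV is covered. The helper name, the `target` threshold and the `max_images` cap are hypothetical choices, not documented values; pick `target` after checking the scale of `meanCoverage` in the `CoverageData` definition.

```python
def load_until_covered(command_input, coverage_output, control,
                       target, max_images=30):
    """Hypothetical helper: send LoadImage until meanCoverage reaches target.

    Returns (reached_target, last_mean_coverage). Assumes one CoverageData
    message arrives on coverage_output per loadImage() command.
    """
    last = None
    for _ in range(max_images):
        command_input.send(control.loadImage())
        coverage = coverage_output.get()  # blocking read, one message per load
        last = coverage.meanCoverage
        if last >= target:
            return True, last
    return False, last
```

Stopping at `max_images` keeps the loop bounded in featureless scenes where coverage may never reach the target.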

Continuous Calibration

```python
command_input.send(dai.DynamicCalibrationControl.startCalibration())

while pipeline.isRunning():
    # Both read styles are shown; a real loop would normally use one of them
    # Blocking read (waits for the next CoverageData message)
    coverage = coverage_output.get()
    print(f"Coverage = {coverage.meanCoverage}")

    # Non-blocking read (returns None if no message is available)
    coverage = coverage_output.tryGet()
    if coverage:
        print(f"Coverage = {coverage.meanCoverage}")
```

Reading Calibration Data

- `dai.DynamicCalibrationControl.startCalibration()`: starts collecting data and attempts calibration.

- `dai.DynamicCalibrationControl.calibrate(force=False)`: calibrates with the data already loaded. Images must be loaded beforehand with the `LoadImage` command, as in the example below.

Manual Calibration

```python
# Load a single image
command_input.send(dai.DynamicCalibrationControl.loadImage())

# Send a command to calibrate with the loaded data
command_input.send(dai.DynamicCalibrationControl.calibrate(force=False))

# Wait for the calibration result
calibration = calibration_output.get()
print(f"Calibration = {calibration.info}")
```
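In the manual flow above, `calibration_output.get()` blocks until a result arrives. If that may not happen, a bounded wait built on `tryGet()` avoids hanging. This is a generic polling sketch; the helper name `wait_for_message`, the timeout and the poll interval are illustrative assumptions, and only `tryGet()` (used in the non-blocking examples on this page) is called on the queue.

```python
import time


def wait_for_message(queue, timeout_s, poll_interval_s=0.05):
    """Poll queue.tryGet() until a message arrives or timeout_s elapses.

    Returns the message, or None on timeout.
    """
    deadline = time.monotonic() + timeout_s
    while True:
        message = queue.tryGet()
        if message is not None:
            return message
        if time.monotonic() >= deadline:
            return None
        time.sleep(poll_interval_s)
```

For example, `result = wait_for_message(calibration_output, timeout_s=30.0)` returns `None` instead of blocking forever. `time.monotonic()` is used so that wall-clock adjustments do not shorten or extend the wait.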

Continuous Calibration

```python
# Start collecting data and attempting calibration
command_input.send(dai.DynamicCalibrationControl.startCalibration())

while pipeline.isRunning():
    # Both read styles are shown; a real loop would normally use one of them
    # Blocking read (waits for the next DynamicCalibrationResult)
    calibration = calibration_output.get()
    print(f"Calibration = {calibration.info}")

    # Non-blocking read (returns None if no result is available)
    calibration = calibration_output.tryGet()
    if calibration:
        print(f"Calibration = {calibration.info}")
```

Performance Modes

```python
# Set the performance mode
command_input.send(
    dai.DynamicCalibrationControl.setPerformanceMode(
        dai.node.DynamicCalibration.PerformanceMode.OPTIMIZE_PERFORMANCE
    )
)
```

Available performance modes:

```python
dai.node.DynamicCalibration.PerformanceMode.OPTIMIZE_PERFORMANCE  # Most strict mode
dai.node.DynamicCalibration.PerformanceMode.DEFAULT  # Less strict, sufficient in most cases
dai.node.DynamicCalibration.PerformanceMode.OPTIMIZE_SPEED  # Favors speed over accuracy
dai.node.DynamicCalibration.PerformanceMode.STATIC_SCENERY  # Not strict
dai.node.DynamicCalibration.PerformanceMode.SKIP_CHECKS  # Skips all internal checks
```

Examples

Dynamic Calibration Interactive Visualizer

The following commands clone depthai-core and run the calibration integration example:

```shell
git clone https://github.com/luxonis/depthai-core.git
cd depthai-core/
python3 -m venv venv
source venv/bin/activate
python3 examples/python/install_requirements.py
python3 examples/python/DynamicCalibration/calibration_integration.py
```

Dynamic Calibration will not fully restore the factory-specified absolute depth accuracy. Use factory tools for production-grade re-calibration.

Scenery guidelines

- Include textured objects at multiple depths.

- Avoid blank walls or featureless surfaces.

- Slowly move the camera to cover the full FOV; avoid sudden motions.

| Recommendation |
|---|
| ✅ Ensure rich texture and visual detail: rich textures, edges, and objects evenly distributed across the FOV create ideal calibration conditions |
| 🚫 Avoid flat or featureless surfaces: surfaces without texture or visually distinct objects provide few usable features |
| 🚫 Avoid reflective and transparent surfaces: they create false 3D features |
| 🚫 Avoid dark scenes: low contrast, shadows, and poor lighting yield few detectable features |
| 🚫 Avoid repetitive patterns: patterned regions look too similar to tell apart |

PerformanceMode tuning

| Mode | When to use |
|---|---|
| DEFAULT | Balanced accuracy vs. speed. |
| STATIC_SCENERY | Camera is fixed and the scene is stable. |
| OPTIMIZE_SPEED | Fastest calibration, reduced precision. |
| OPTIMIZE_PERFORMANCE | Maximum precision in feature-rich scenes. |
| SKIP_CHECKS | Automated pipelines where the internal scene-quality checks are skipped. |

For highest accuracy, combine OPTIMIZE_PERFORMANCE with a dynamic, well-featured environment.
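The table above can be read as a simple decision rule. The sketch below encodes one such reading; the flag names and the order in which they are checked are illustrative assumptions, not DepthAI behavior, and the function returns mode names as strings so it runs without a device.

```python
def choose_performance_mode(camera_fixed=False, need_speed=False,
                            automated=False, feature_rich=False):
    """Map deployment conditions to a PerformanceMode name (hypothetical)."""
    if automated:
        return "SKIP_CHECKS"           # internal scene-quality checks are skipped
    if camera_fixed:
        return "STATIC_SCENERY"        # fixed camera, stable scene
    if need_speed:
        return "OPTIMIZE_SPEED"        # fastest, reduced precision
    if feature_rich:
        return "OPTIMIZE_PERFORMANCE"  # maximum precision in feature-rich scenes
    return "DEFAULT"                   # balanced accuracy vs. speed
```

The returned name can be resolved with `getattr(dai.node.DynamicCalibration.PerformanceMode, name)` and sent with `dai.DynamicCalibrationControl.setPerformanceMode(...)`.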

Limitations & notes

- Supported devices: Dynamic Calibration is available for:

  - All stereo OAK Series 2 cameras (excluding FFC)

  - All stereo OAK Series 4 cameras

- DepthAI version: requires DepthAI 3.0 or later.

- Re-calibrated parameters: updates extrinsics only; intrinsics remain unchanged.

- OS support: available on Linux, macOS and Windows.

- Absolute depth spec: DCL improves relative depth perception; absolute accuracy may still differ slightly from the original factory spec.
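The version requirements on this page (3.0 for DynamicCalibration, 3.6 for automatic calibration on by default) can be checked at startup. A minimal sketch, assuming the version string looks like `"3.6.0"`; it only compares the leading major and minor numbers, and the helper names are hypothetical.

```python
import re


def parse_version(version):
    """Return the leading (major, minor) numbers of a version string."""
    match = re.match(r"\s*(\d+)\.(\d+)", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return int(match.group(1)), int(match.group(2))


def dynamic_calibration_status(version):
    """Summarize what this page states for a given DepthAI version."""
    major_minor = parse_version(version)
    return {
        # DynamicCalibration requires DepthAI 3.0 or later
        "dynamic_calibration_supported": major_minor >= (3, 0),
        # From 3.6, automatic calibration defaults to ON_START, unless the
        # pipeline already has a DynamicCalibration or AutoCalibration node
        "auto_calibration_on_by_default": major_minor >= (3, 6),
    }
```

Assuming the installed package exposes `dai.__version__`, call `dynamic_calibration_status(dai.__version__)`. Tuples are compared element by element, so `"3.10"` correctly ranks above `"3.6"`.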

Troubleshooting

| Symptom | Possible cause | Fix |
|---|---|---|
| High reprojection error | Incorrect model name or HFOV in board config | Verify board JSON and camera specs |
| Depth still incorrect after “successful” DCL | Left / right cameras swapped | Swap sockets or update board config and recalibrate |
| `nullopt` quality report | Insufficient scene coverage | Move the camera to capture richer textures |
| Runtime error: "The calibration on the device is too old to perform DynamicCalibration, full re-calibration required!" | The device calibration is too outdated for dynamic recalibration to provide any benefit | A newer device is needed |

See also

- General Dynamic Calibration information page

- Manual stereo and ToF calibration guide

- For automatic calibration, see the AutoCalibration documentation page.