OAK4 OS version

If you're using an OAK4 camera, make sure it runs Luxonis OS 1.6 or newer; on older versions the ROI won't be mapped correctly.
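As a rough illustration of what "mapping the ROI" involves, the sketch below turns a mouse drag on the displayed frame into an `(x, y, w, h)` rectangle and scales it to the source resolution, the same arithmetic the example further down performs with its scale factors. The helper names `normalize_drag` and `scale_roi`, the clamping step, and the 3840x2160 source size are assumptions made for illustration; they are not part of the DepthAI API, and the sketch assumes the output frame is a plain resize of the source with no cropping.

```python
def normalize_drag(p1, p2):
    """Turn two drag corners into (x, y, w, h) with a top-left origin."""
    (x1, y1), (x2, y2) = p1, p2
    return (min(x1, x2), min(y1, y2), abs(x2 - x1), abs(y2 - y1))


def scale_roi(roi, output_size, source_size):
    """Scale an (x, y, w, h) ROI from output-frame pixels to source pixels.

    Assumes the output frame is a plain resize of the source (no crop).
    The clamping is an added safety step, not something the example does.
    """
    sx = source_size[0] / output_size[0]
    sy = source_size[1] / output_size[1]
    x, y, w, h = roi
    x, y = int(x * sx), int(y * sy)
    w, h = int(w * sx), int(h * sy)
    # Keep the rectangle inside the source frame and at least 1 px in size
    x = max(0, min(x, source_size[0] - 1))
    y = max(0, min(y, source_size[1] - 1))
    w = max(1, min(w, source_size[0] - x))
    h = max(1, min(h, source_size[1] - y))
    return (x, y, w, h)


# Hypothetical sizes: a 1920x1080 preview of a 3840x2160 source
roi = normalize_drag((960, 540), (480, 270))
print(roi)
print(scale_roi(roi, (1920, 1080), (3840, 2160)))
```

The scaled rectangle is what would be passed to `setAutoExposureRegion` and `setAutoFocusRegion`, since the region is given in source coordinates rather than in the coordinates of the resized preview.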

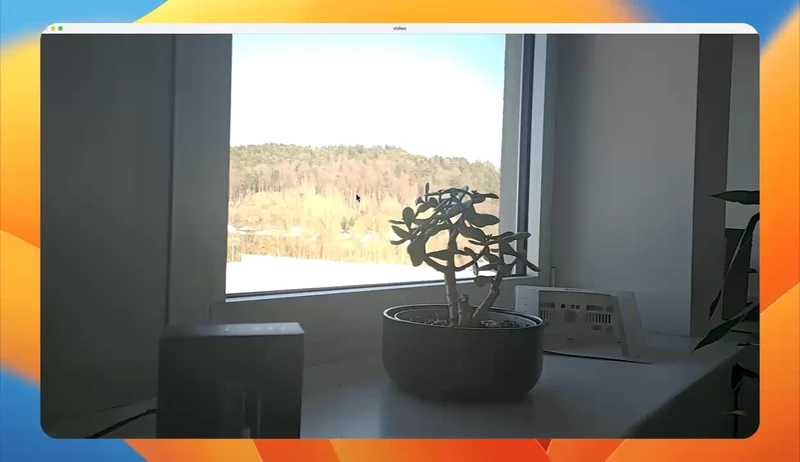

Demo

Pipeline

Source code

Python


    #!/usr/bin/env python3

    import cv2
    import depthai as dai

    # Create pipeline
    with dai.Pipeline() as pipeline:
        # Define source and output
        cam = pipeline.create(dai.node.Camera).build(dai.CameraBoardSocket.CAM_A)
        stream_q = cam.requestOutput((1920, 1080)).createOutputQueue()

        # Input queue for sending CameraControl messages to the camera
        cam_q_in = cam.inputControl.createInputQueue()

        # Connect to device and start pipeline
        pipeline.start()

        # ROI selection variables
        start_points = None  # drag start corner (x, y), in window coordinates
        roi_rect = None  # current ROI as (x, y, w, h), in window coordinates
        scale_factors = None  # (x, y) scale from output frame size to source size

        # Mouse callback function for ROI selection
        def select_roi(event, x, y, flags, param):
            global start_points, roi_rect

            def set_roi_rect():
                # Build a top-left-anchored rectangle from the drag start
                # and the current mouse position
                global roi_rect
                x1, y1 = start_points
                x2, y2 = (x, y)
                roi_rect = (min(x1, x2), min(y1, y2), abs(x2 - x1), abs(y2 - y1))

            if event == cv2.EVENT_LBUTTONDOWN:
                roi_rect = None
                start_points = (x, y)
            elif event == cv2.EVENT_MOUSEMOVE and start_points:
                set_roi_rect()
            elif event == cv2.EVENT_LBUTTONUP and start_points:
                set_roi_rect()
                # Scale the ROI from window coordinates to source coordinates
                roi_rect_scaled = (
                    int(roi_rect[0] * scale_factors[0]),
                    int(roi_rect[1] * scale_factors[1]),
                    int(roi_rect[2] * scale_factors[0]),
                    int(roi_rect[3] * scale_factors[1]),
                )
                print(f"ROI selected: {roi_rect}")
                print(f"Scaled ROI selected: {roi_rect_scaled}. Setting exposure and focus to this region.")
                ctrl = dai.CameraControl()
                ctrl.setAutoExposureRegion(*roi_rect_scaled)
                ctrl.setAutoFocusRegion(*roi_rect_scaled)
                cam_q_in.send(ctrl)
                start_points = None

        # Create a window and set the mouse callback
        cv2.namedWindow("video")
        cv2.setMouseCallback("video", select_roi)

        while pipeline.isRunning():
            img_hd: dai.ImgFrame = stream_q.get()
            if scale_factors is None:
                # Compute the scale once, from the first frame's transformation
                transformation = img_hd.getTransformation()
                source_size = transformation.getSourceSize()
                output_size = transformation.getSize()
                print(source_size, output_size)
                scale_factors = (source_size[0] / output_size[0],
                                 source_size[1] / output_size[1])
            frame = img_hd.getCvFrame()

            # Draw the ROI rectangle if it exists
            if roi_rect is not None:
                x, y, w, h = roi_rect
                cv2.rectangle(frame, (x, y), (x + w, y + h), (0, 255, 0), 2)

            cv2.imshow("video", frame)

            key = cv2.waitKey(1)
            if key == ord("q"):
                break

Need assistance?

Head over to the Discussion Forum for technical support or any other questions you might have.