Demo

Pipeline

Source code

Python


1#!/usr/bin/env python3

2import cv2

3import depthai as dai

4import numpy as np

5from pathlib import Path

6

7# Get the absolute path of the current script's directory

8script_dir = Path(__file__).resolve().parent

9examplesRoot = (script_dir / Path('../')).resolve() # This resolves the parent directory correctly

10models = examplesRoot / 'models'

11tagImage = models / 'lenna.png'

12

13# Decode the image using OpenCV

14lenaImage = cv2.imread(str(tagImage.resolve()))

15lenaImage = cv2.resize(lenaImage, (256, 256))

16lenaImage = np.array(lenaImage)

17

18device = dai.Device()

19platform = device.getPlatform()

20if(platform == dai.Platform.RVC2):

21 daiType = dai.ImgFrame.Type.RGB888p

22elif(platform == dai.Platform.RVC4):

23 daiType = dai.ImgFrame.Type.RGB888i

24else:

25 raise RuntimeError("Platform not supported")

26

27daiLenaImage = dai.ImgFrame()

28

29daiLenaImage.setCvFrame(lenaImage, daiType)

30

31with dai.Pipeline(device) as pipeline:

32 model = dai.NNModelDescription("depthai-test-models/simple-concatenate-model")

33 model.platform = platform.name

34

35 nnArchive = dai.NNArchive(dai.getModelFromZoo(model))

36 cam = pipeline.create(dai.node.Camera).build()

37 camOut = cam.requestOutput((256,256), daiType)

38

39 neuralNetwork = pipeline.create(dai.node.NeuralNetwork)

40 neuralNetwork.setNNArchive(nnArchive)

41 camOut.link(neuralNetwork.inputs["image1"])

42 lennaInputQueue = neuralNetwork.inputs["image2"].createInputQueue()

43 # No need to send the second image everytime

44 neuralNetwork.inputs["image2"].setReusePreviousMessage(True)

45 qNNData = neuralNetwork.out.createOutputQueue()

46 pipeline.start()

47 lennaInputQueue.send(daiLenaImage)

48 while pipeline.isRunning():

49 inNNData: dai.NNData = qNNData.get()

50 tensor : np.ndarray = inNNData.getFirstTensor()

51 # Drop the first dimension

52 tensor = tensor.squeeze().astype(np.uint8)

53 # Check the shape - in case 3 is not the last dimension, permute it to the last

54 if tensor.shape[0] == 3:

55 tensor = np.transpose(tensor, (1, 2, 0))

56 print(tensor.shape)

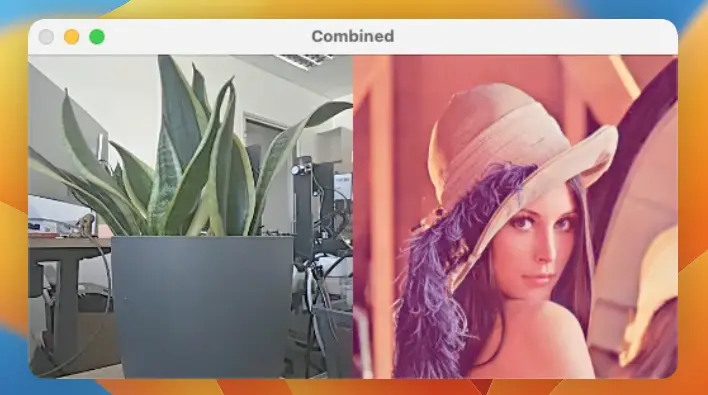

57 cv2.imshow("Combined", tensor)

58 key = cv2.waitKey(1)

59 if key == ord('q'):

60 breakNeed assistance?

Head over to Discussion Forum for technical support or any other questions you might have.
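The post-processing inside the loop above (squeeze, cast, optional transpose) can be tried without a device. The following is a minimal sketch using NumPy only; the helper name `to_hwc_image` and the synthetic tensor shapes are hypothetical, and the clipping step is an addition that the example itself does not perform.

```python
import numpy as np


def to_hwc_image(tensor: np.ndarray) -> np.ndarray:
    """Convert a raw NN output tensor to an HWC uint8 image (hypothetical helper)."""
    # Remove singleton dimensions such as the leading batch axis.
    image = np.squeeze(tensor)
    if image.ndim != 3:
        raise ValueError(f"expected a 3-D image tensor, got shape {image.shape}")
    # Channel-first (3, H, W) layouts are moved to channel-last (H, W, 3).
    if image.shape[0] == 3 and image.shape[-1] != 3:
        image = np.transpose(image, (1, 2, 0))
    # Clip before casting so out-of-range values saturate instead of wrapping.
    # This is an addition; the example above casts directly.
    return np.clip(image, 0, 255).astype(np.uint8)


# Synthetic stand-ins for the two layouts a network might return.
planar = np.random.rand(1, 3, 4, 8).astype(np.float32) * 255
interleaved = np.random.rand(1, 4, 8, 3).astype(np.float32) * 255

print(to_hwc_image(planar).shape)       # (4, 8, 3)
print(to_hwc_image(interleaved).shape)  # (4, 8, 3)
```

Both layouts end up channel-last, which is the layout `cv2.imshow` expects. Note that checking `shape[0] == 3` is a heuristic: an image that is exactly 3 pixels tall would be ambiguous.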