Training

Training Tutorials

Training Notebooks

Classification

Image classification is the task of assigning a single label, chosen from a predefined set of categories, to an entire input image.

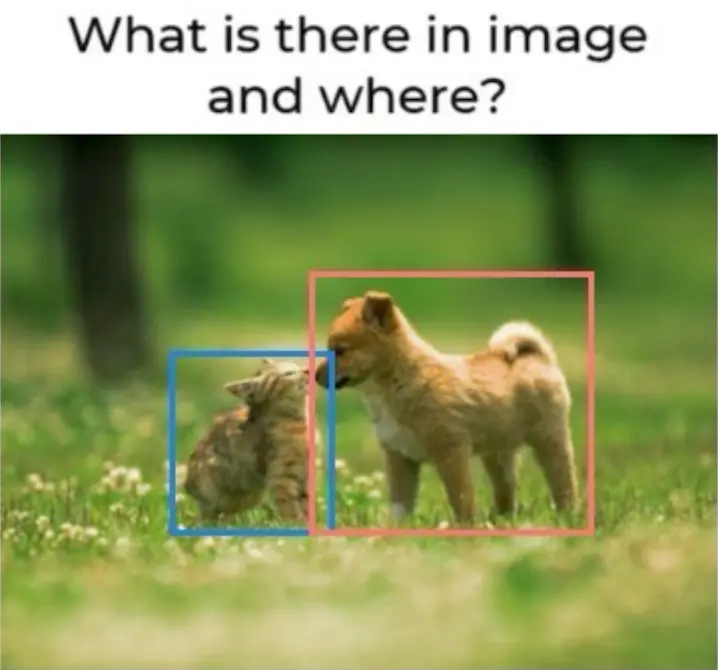
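
As a rough illustration of what "one label per image" means in practice, the sketch below turns a vector of per-class scores into a single predicted label. The function names `softmax` and `classify`, the class names, and the score values are all hypothetical and chosen for illustration; they are not taken from the tutorials or notebooks.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify(logits, class_names):
    """Return the single most likely label and its probability."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return class_names[best], probs[best]

# Hypothetical scores for one image over three made-up classes.
label, confidence = classify([2.0, 0.5, -1.0], ["cat", "dog", "bird"])
print(label, round(confidence, 3))
```

The whole image maps to exactly one label here, which is the property that separates classification from the tasks described next.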

Object detection

Object detection involves identifying and locating multiple objects within an image, producing a bounding box and a class label for each one.

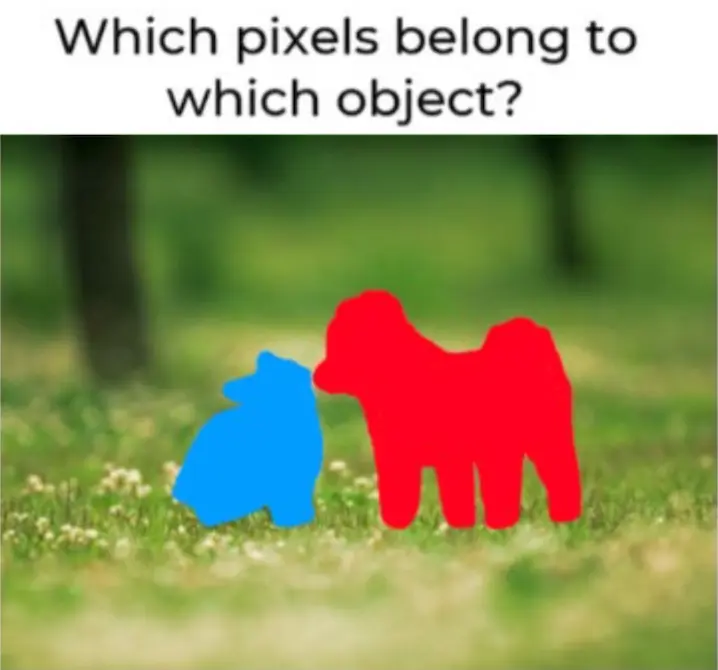
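
To make the output of a detector concrete, here is a minimal sketch of one possible representation, assuming boxes are stored as `(x_min, y_min, x_max, y_max)` in pixel coordinates. The `Detection` class and the `iou` helper are hypothetical names for illustration only. Intersection over union (IoU) is included because it is a common way to measure how well a predicted box overlaps a reference box; it is not necessarily what the notebooks use.

```python
from dataclasses import dataclass

@dataclass
class Detection:
    label: str    # predicted class name
    score: float  # confidence in [0, 1]
    box: tuple    # assumed layout: (x_min, y_min, x_max, y_max)

def iou(a, b):
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

# One image can yield several detections, each with its own box.
detections = [
    Detection("person", 0.92, (10, 20, 110, 220)),
    Detection("dog", 0.81, (150, 120, 260, 230)),
]
overlap = iou(detections[0].box, detections[1].box)  # these boxes do not overlap
```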

Semantic segmentation

Semantic segmentation assigns a class label to every pixel in an image, so the output is a label map with the same height and width as the input.
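
The per-pixel nature of the task can be sketched as follows, assuming a model has already produced a score for each class at each pixel. The `segment` function and the tiny 2x2 score grid are hypothetical and serve only to show the shape of the input and output.

```python
def segment(scores):
    """Pick the highest-scoring class index for every pixel.

    `scores` is assumed to be a nested list shaped [height][width][num_classes].
    The result is a label map shaped [height][width].
    """
    return [
        [max(range(len(pixel)), key=pixel.__getitem__) for pixel in row]
        for row in scores
    ]

# Hypothetical scores for a 2x2 image with two classes (0 and 1).
scores = [
    [[0.9, 0.1], [0.2, 0.8]],
    [[0.6, 0.4], [0.3, 0.7]],
]
mask = segment(scores)
print(mask)  # [[0, 1], [0, 1]]
```

Compared with the classification sketch, the same "pick the best class" step is applied once per pixel instead of once per image.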