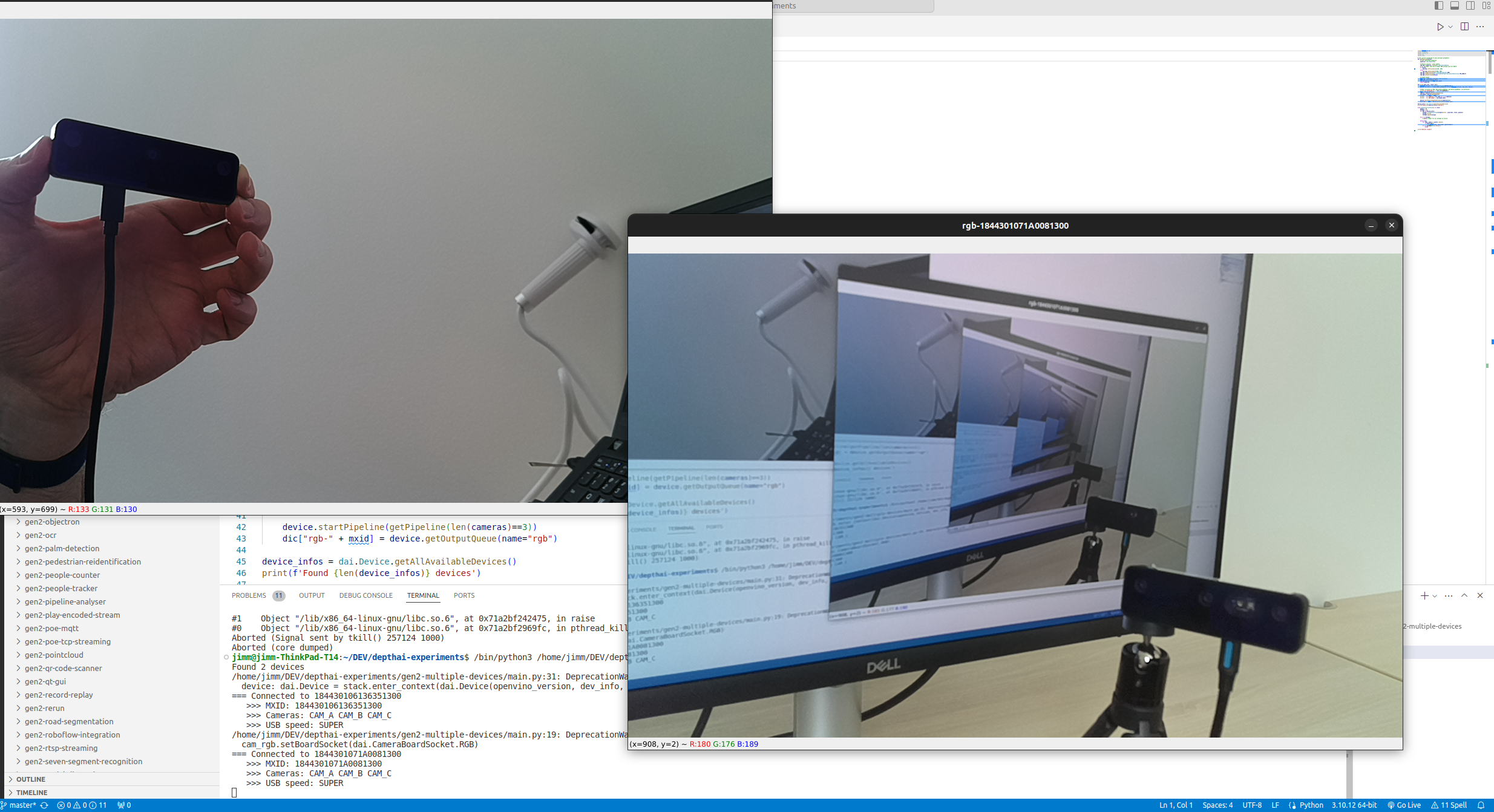

Multi-Device Setup

Discovering OAK cameras

The snippet below lists every available OAK camera and prints its MxID (unique identifier) and XLink state:

```python
import depthai

for device in depthai.Device.getAllAvailableDevices():
    print(f"{device.getMxId()} {device.state}")
```

Example output with two cameras connected:

```
14442C10D13EABCE00 XLinkDeviceState.X_LINK_UNBOOTED
14442C1071659ACD00 XLinkDeviceState.X_LINK_UNBOOTED
```

Selecting a Specific DepthAI device to be used

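Pass a `DeviceInfo` to the `Device` constructor to connect to one particular device, identified by its MxID, IP address, or USB port name:

```python
import depthai

pipeline = depthai.Pipeline()  # define your nodes and links here

# Specify MxID, IP address, or USB path
device_info = depthai.DeviceInfo("14442C108144F1D000")  # MxID
# device_info = depthai.DeviceInfo("192.168.1.44")  # IP address
# device_info = depthai.DeviceInfo("3.3.3")  # USB port name

with depthai.Device(pipeline, device_info) as device:
    # ... use the device, e.g. get its output queues
    print(f"Connected to {device.getMxId()}")
```

Specifying POE device to be used

A POE device can be selected by its IP address, as shown by the commented-out `DeviceInfo("192.168.1.44")` line in the snippet above.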
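To drive several cameras at the same time, every `Device` has to stay open for the whole session, so a single `with` statement per device does not scale well. One way to handle this is Python's `contextlib.ExitStack`, which keeps any number of context managers open and closes them together. The sketch below is a minimal, hypothetical helper (`open_all` and its `open_device` factory are illustrative names, not part of the DepthAI API); it is written against plain context managers so the logic is independent of the hardware.

```python
import contextlib
from typing import Any, Callable, Iterable, List


def open_all(device_infos: Iterable[Any],
             open_device: Callable[[Any], Any],
             stack: contextlib.ExitStack) -> List[Any]:
    """Open one device per entry and register each on `stack`.

    `open_device` is a hypothetical factory; with DepthAI it could be
    `lambda info: depthai.Device(pipeline, info)`.
    """
    devices = []
    for info in device_infos:
        # enter_context() calls __enter__ immediately and schedules __exit__
        # for when the stack closes (in reverse order of opening). If a later
        # device fails to open, the ones already opened are still closed.
        devices.append(stack.enter_context(open_device(info)))
    return devices
```

With DepthAI, this could be used as `with contextlib.ExitStack() as stack:` followed by `devices = open_all(depthai.Device.getAllAvailableDevices(), lambda info: depthai.Device(pipeline, info), stack)`, after which all discovered cameras remain open until the `with` block ends.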

Timestamp syncing

DepthAI 2.24 introduced the Sync node, which can synchronize messages from different streams or from different sensors (e.g., IMU and color frames). See Sync node for more details. The Sync node does not currently support syncing across multiple devices, so to sync messages from several devices you need to use the manual approach, for example by matching message timestamps on the host.
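A manual approach can be sketched as follows: buffer the messages from each device on the host and emit a group whenever every device has a message within a small time tolerance of the others. This is a minimal sketch under stated assumptions: each message is a `(timestamp_seconds, payload)` pair, each per-device buffer is sorted oldest first, and the timestamps share one clock (with DepthAI, a host-synchronized value such as `msg.getTimestamp().total_seconds()` is assumed here). The function name `match_by_timestamp` and the 10 ms default tolerance are hypothetical choices, not part of the DepthAI API.

```python
from collections import deque
from typing import Any, Deque, Dict, List, Tuple

Message = Tuple[float, Any]  # (timestamp in seconds, payload)


def match_by_timestamp(queues: Dict[str, Deque[Message]],
                       tolerance: float = 0.010) -> List[Dict[str, Message]]:
    """Pop groups holding one message per device whose timestamps lie
    within `tolerance` seconds of each other.

    Each deque must be sorted by timestamp (oldest first). Messages that can
    no longer be matched are dropped; unmatched recent ones stay queued.
    """
    groups = []
    # Only try to match while every device has at least one buffered message.
    while queues and all(queues.values()):
        heads = {name: q[0] for name, q in queues.items()}
        oldest = min(heads, key=lambda n: heads[n][0])
        newest = max(heads, key=lambda n: heads[n][0])
        if heads[newest][0] - heads[oldest][0] <= tolerance:
            # All head messages are close enough: emit them as one group.
            groups.append({name: q.popleft() for name, q in queues.items()})
        else:
            # Every other stream's remaining messages are at least as new as
            # its head, so the oldest head can never be matched: drop it.
            queues[oldest].popleft()
    return groups
```

In a multi-device loop, each message received from a device's output queue (for example via `tryGet()`) would be appended to that device's deque, and `match_by_timestamp` called after every poll. Dropping the oldest unmatched message keeps the buffers bounded when one camera runs ahead of the others.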

Point cloud fusion

Point cloud fusion on GitHub

Multi camera calibration

Multiple camera calibration on GitHub