Nodes


Inputs and outputs

Node input

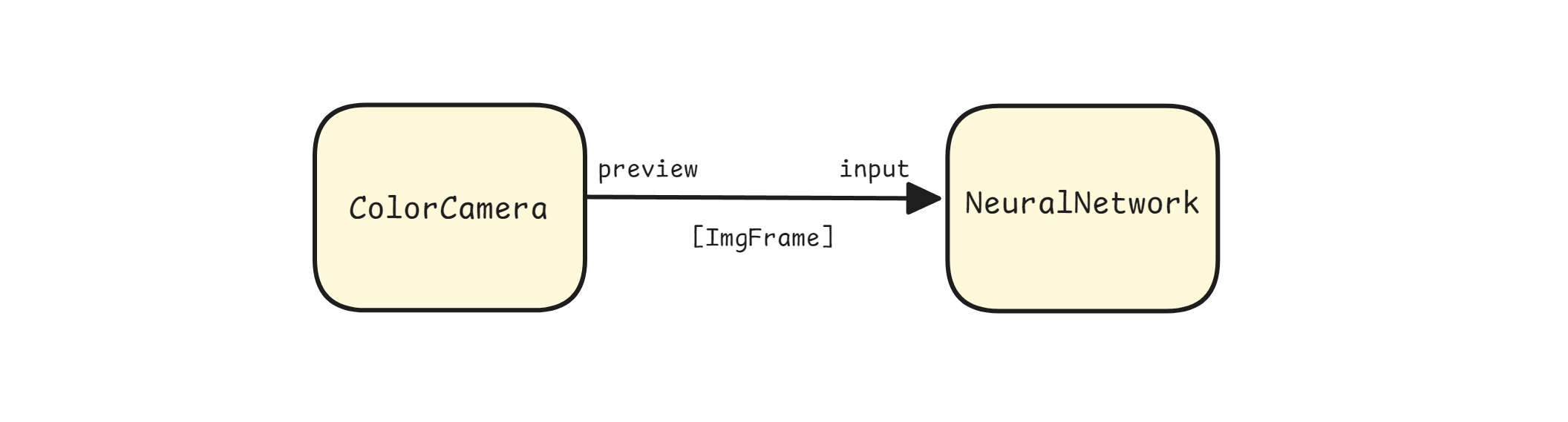

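Node input queues are configurable with input.setBlocking(bool) and input.setQueueSize(num), e.g. edgeDetector.inputImage.setQueueSize(10). If the input queue fills up, the behavior of the input depends on its blocking attribute. Let's say we have linked the ColorCamera preview output with the NeuralNetwork input named input. If that input is blocking and its queue is full, ColorCamera has to wait until there is room in the queue before it can send its next message. If the input is non-blocking, new messages overwrite the oldest queued ones: the pipeline cannot freeze on this input, but messages (frames) get dropped.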
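The blocking and overwriting behaviors described above can be illustrated with a small pure-Python model. This is a hypothetical sketch, not the DepthAI implementation: the class `ModelInputQueue` and its `push` method are invented for illustration and only mirror what `setBlocking(bool)` and `setQueueSize(num)` configure on a real node input.

```python
from collections import deque


class ModelInputQueue:
    """Hypothetical model of a node input queue (not part of DepthAI).

    Mirrors the two settings exposed by a real input:
    input.setBlocking(bool) and input.setQueueSize(num).
    """

    def __init__(self, size: int, blocking: bool) -> None:
        self.size = size
        self.blocking = blocking
        self.messages = deque()

    def push(self, msg) -> bool:
        """Try to enqueue msg; return False if the sender would have to wait."""
        if len(self.messages) < self.size:
            self.messages.append(msg)
            return True
        if self.blocking:
            # Blocking input: the queue is full, so the sender (e.g. the
            # ColorCamera preview output) must wait until a slot frees up.
            return False
        # Overwriting input: drop the oldest queued message to make room.
        self.messages.popleft()
        self.messages.append(msg)
        return True


blocking_in = ModelInputQueue(size=2, blocking=True)
print([blocking_in.push(f) for f in ("f0", "f1", "f2")])  # [True, True, False]
print(list(blocking_in.messages))                         # ['f0', 'f1']

overwriting_in = ModelInputQueue(size=2, blocking=False)
print([overwriting_in.push(f) for f in ("f0", "f1", "f2")])  # [True, True, True]
print(list(overwriting_in.messages))                         # ['f1', 'f2']
```

In DepthAI itself the blocking case does not return a flag: the sending node simply waits, which is how a full blocking input can stall everything upstream of it.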

Node output

Pipeline freezing

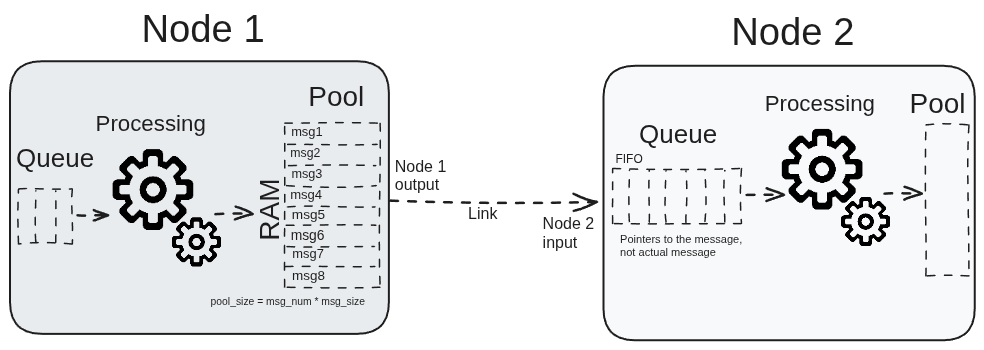

When all the messages from the node's pool have been sent out and none has been returned yet, the node blocks (freezes) and waits until a message is released, i.e. no longer used by any node in the pipeline.
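The freezing condition above can be pictured with a counter-based model of an output message pool. This is a hypothetical illustration only: `ModelMessagePool`, `acquire` and `release` are invented names, and a real pool holds actual message buffers rather than a simple count.

```python
class ModelMessagePool:
    """Hypothetical model of a node's output message pool (not DepthAI code)."""

    def __init__(self, pool_size: int) -> None:
        self.free = pool_size  # messages currently available to the node

    def acquire(self) -> bool:
        """Take a message from the pool; False means the node would freeze."""
        if self.free == 0:
            return False
        self.free -= 1
        return True

    def release(self) -> None:
        """Called once no node in the pipeline uses the message any more."""
        self.free += 1


pool = ModelMessagePool(pool_size=2)
print(pool.acquire(), pool.acquire())  # True True  (both messages sent out)
print(pool.acquire())                  # False      (node blocks: none returned yet)
pool.release()                         # a downstream node is done with one message
print(pool.acquire())                  # True       (node can continue)
```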

class depthai.Node

class Connection

Connection between an Input and an Output.


class Id

Node identifier. Unique for every node on a single Pipeline.


method getInputs(self) -> list[Node.Input]

Retrieves all of the node's inputs.

method getName(self) -> str

Retrieves the node's name.

method getOutputs(self) -> list[Node.Output]

Retrieves all of the node's outputs.

property id

Id of the node.

class depthai.Node.Connection


class depthai.Node.DatatypeHierarchy


class depthai.Node.Input

class Type

Members: SReceiver, MReceiver


method getBlocking(self) -> bool

Gets the input queue behavior. Returns: True if blocking, False if overwriting.

method getQueueSize(self) -> int

Gets the input queue size. Returns: Maximum input queue size.

method getReusePreviousMessage(self) -> bool

Equivalent to getWaitForMessage, but with inverted logic.

method getWaitForMessage(self) -> bool

Gets whether this input waits for a message to arrive when the node processes data. Returns: Whether to wait for a message to arrive at this input.

method setBlocking(self, blocking: bool)

Overrides the default input queue behavior. Parameter ``blocking``: True for blocking, False for overwriting.

method setQueueSize(self, size: typing.SupportsInt)

Overrides the default input queue size. If the queue fills up, behavior depends on the ``blocking`` attribute. Parameter ``size``: Maximum input queue size.

method setReusePreviousMessage(self, reusePreviousMessage: bool)

Equivalent to setWaitForMessage, but with inverted logic.

method setWaitForMessage(self, waitForMessage: bool)

Overrides the default wait-for-message behavior. Applicable to nodes with multiple inputs: specifies whether the node waits for a message on this input before processing data. Parameter ``waitForMessage``: Whether to wait for a message to arrive at this input.
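The interplay of setWaitForMessage and setReusePreviousMessage on a node with multiple inputs can be sketched with a toy model. Everything here (`ModelInput`, `process_step`, and the `frame` and `config` inputs) is hypothetical; the model also assumes that a non-waiting input with no previous message is treated as not ready, which may differ from how DepthAI actually handles that case.

```python
class ModelInput:
    """Hypothetical stand-in for one input of a multi-input node (not DepthAI code)."""

    def __init__(self, wait_for_message: bool = True) -> None:
        self.wait_for_message = wait_for_message
        self.pending = []  # newly arrived messages, oldest first
        self.last = None   # most recently consumed message

    @property
    def reuse_previous_message(self) -> bool:
        # Mirrors getReusePreviousMessage(): the inverse of getWaitForMessage().
        return not self.wait_for_message

    def ready(self) -> bool:
        """True if this input can supply a message for the next processing step."""
        if self.pending:
            return True
        # A non-waiting input may fall back to its previous message. Model
        # assumption: with no previous message yet, it is not ready either.
        return self.reuse_previous_message and self.last is not None

    def take(self):
        """Consume the next new message if there is one, else reuse the last one."""
        if self.pending:
            self.last = self.pending.pop(0)
        return self.last


def process_step(inputs: dict):
    """Run one step of the model node; return None if it has to keep waiting."""
    if not all(inp.ready() for inp in inputs.values()):
        return None
    return {name: inp.take() for name, inp in inputs.items()}


frames = ModelInput(wait_for_message=True)   # wait for every new frame
config = ModelInput(wait_for_message=False)  # reuse the last config
node_inputs = {"frame": frames, "config": config}

config.pending.append("cfg-A")
frames.pending.extend(["frame-0", "frame-1"])
print(process_step(node_inputs))  # {'frame': 'frame-0', 'config': 'cfg-A'}
print(process_step(node_inputs))  # {'frame': 'frame-1', 'config': 'cfg-A'}
print(process_step(node_inputs))  # None (no new frame, so the node waits)
```

The waiting input paces the node, while the non-waiting one only has to have delivered a message at some point.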


class depthai.Node.Input.Type


class depthai.Node.InputMap

method __bool__(self) -> bool

Checks whether the map is nonempty.


class depthai.Node.Output

class Type

Members: MSender, SSender


method canConnect(self, input: Node.Input) -> bool

Checks whether a connection is possible. Parameter ``input``: Input to connect to. Returns: True if the connection is possible, False otherwise.

method getConnections(self) -> list[Node.Connection]

Retrieves all connections from this output. Returns: List of connections.

method isSamePipeline(self, input: Node.Input) -> bool

Checks whether this output and the given input are on the same pipeline. See also: canConnect, for checking whether a connection is possible. Returns: True if the output and input are on the same pipeline.

method link(self, input: Node.Input)

Links the current output to the given input. Throws an error if this output cannot be linked to the given input, or if they are already linked. Parameter ``input``: Input to link to.

method unlink(self, input: Node.Input)

Unlinks a previously linked connection. Throws an error if not linked. Parameter ``input``: Input to unlink from.
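The rules documented for link, unlink, canConnect and isSamePipeline can be summarized in a small model. The `Sketch*` classes below are hypothetical and not part of DepthAI; in particular, the real canConnect may also check that the output and input message types are compatible, which this sketch does not model.

```python
class SketchPipeline:
    """Hypothetical pipeline object; only used to tell pipelines apart."""


class SketchInput:
    def __init__(self, pipeline: SketchPipeline, name: str) -> None:
        self.pipeline = pipeline
        self.name = name


class SketchOutput:
    """Models the documented error rules of Node.Output.link and unlink."""

    def __init__(self, pipeline: SketchPipeline, name: str) -> None:
        self.pipeline = pipeline
        self.name = name
        self.connections = []  # inputs this output is currently linked to

    def is_same_pipeline(self, inp: SketchInput) -> bool:
        return self.pipeline is inp.pipeline

    def can_connect(self, inp: SketchInput) -> bool:
        # Assumption: only the same-pipeline check is modelled here.
        return self.is_same_pipeline(inp)

    def link(self, inp: SketchInput) -> None:
        if not self.can_connect(inp):
            raise RuntimeError(f"cannot link {self.name} to {inp.name}")
        if inp in self.connections:
            raise RuntimeError(f"{self.name} is already linked to {inp.name}")
        self.connections.append(inp)

    def unlink(self, inp: SketchInput) -> None:
        if inp not in self.connections:
            raise RuntimeError(f"{self.name} is not linked to {inp.name}")
        self.connections.remove(inp)


p1, p2 = SketchPipeline(), SketchPipeline()
preview = SketchOutput(p1, "preview")
nn_input = SketchInput(p1, "input")
preview.link(nn_input)
print([i.name for i in preview.connections])  # ['input']
try:
    preview.link(nn_input)  # second link to the same input
except RuntimeError as err:
    print(err)  # preview is already linked to input
print(preview.can_connect(SketchInput(p2, "other")))  # False (different pipeline)
preview.unlink(nn_input)
print(preview.connections)  # []
```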


class depthai.Node.Output.Type


class depthai.Node.OutputMap

method __bool__(self) -> bool

Checks whether the map is nonempty.
