DepthAI v2

Quick links to get started with the DepthAI software.

Software ecosystem

The software ecosystem built on top of the DepthAI API.

DepthAI API

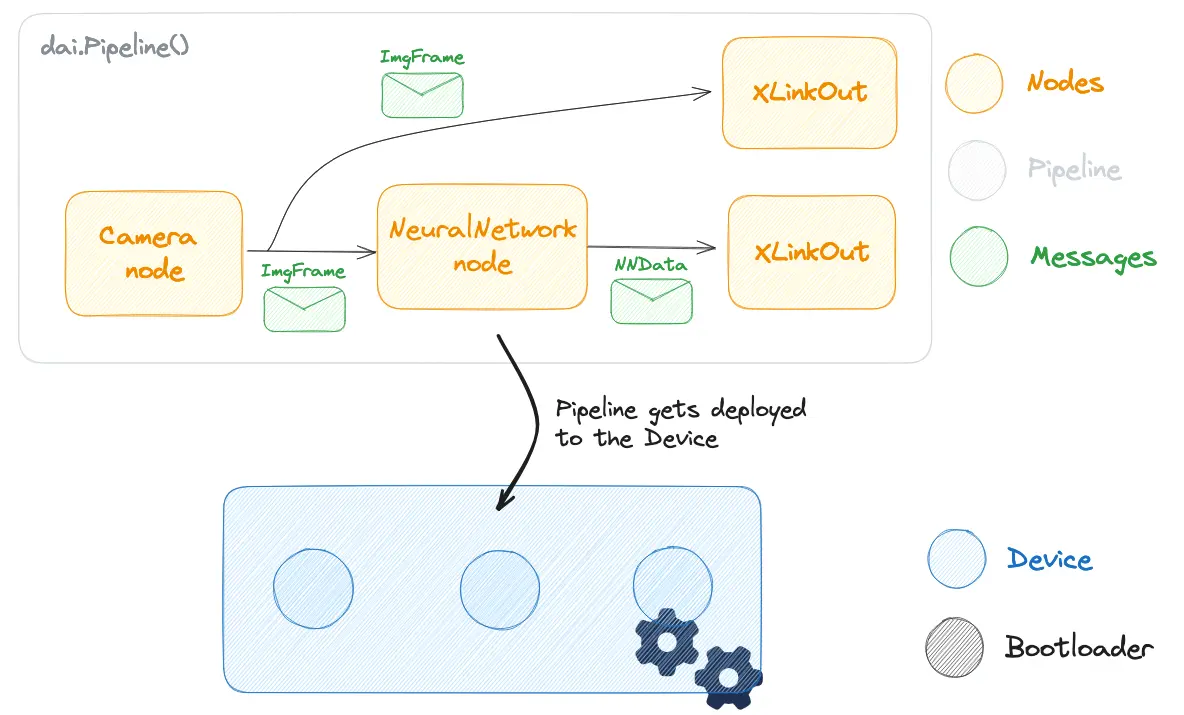

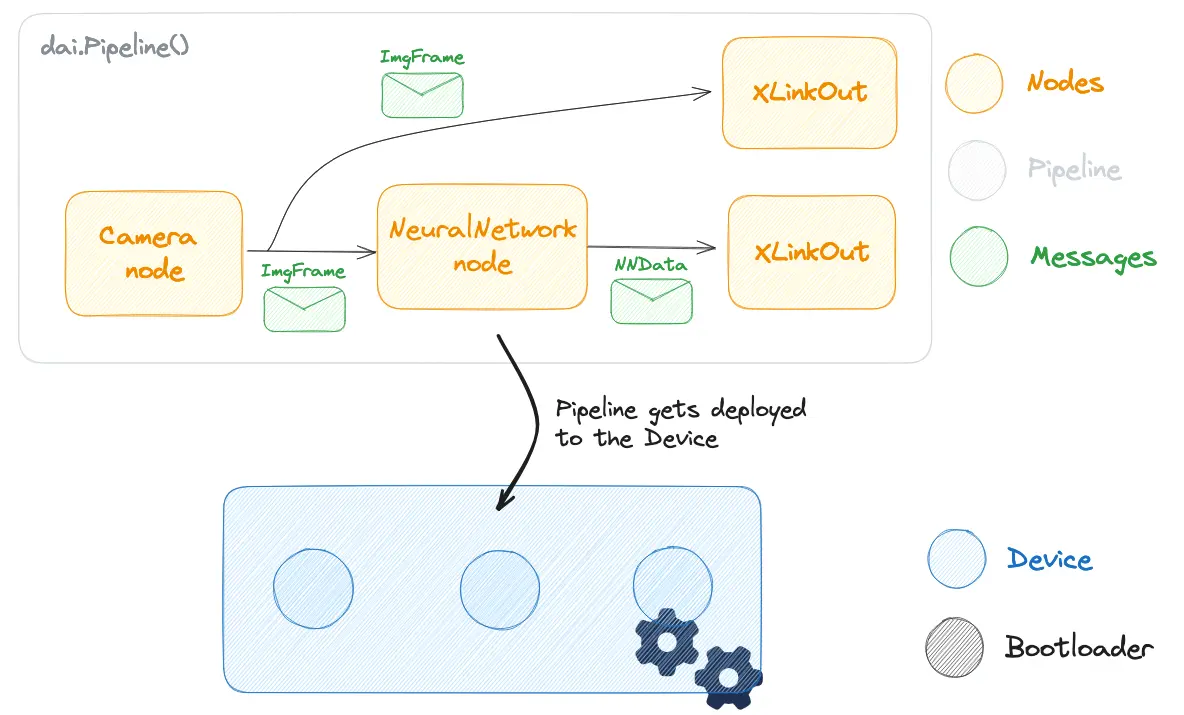

DepthAI API (Python, C++) allows users to develop and deploy vision pipelines that run on the accelerated hardware blocks on our devices.

DepthAI Components

- Nodes represent a sensor, accelerated hardware, or some compute function

- Pipeline consists of linked nodes and is deployed to the device, where it runs on accelerated hardware blocks

- Messages are used for communication between nodes. They hold data and metadata

- Device represents a Luxonis device, either an OAK camera or a RAE. It handles connectivity and communication

- Bootloader handles the logic when booting the device and makes the device accessible for connection