ToF

Supported on: RVC2

The ToF node converts raw Time-of-Flight sensor data into depth and exposes both base and filtered outputs. It is available on devices with integrated ToF sensors.

ToF depth can be used directly with spatial nodes, for example SpatialDetectionNetwork and SpatialLocationCalculator.

For depth quality comparisons with stereo, see ToF depth accuracy.

How to place it

Python

    import depthai as dai

    pipeline = dai.Pipeline()
    tof = pipeline.create(dai.node.ToF)

Inputs and Outputs

DepthAI v3 ToF architecture

- ToF base decode: converts sensor measurements into base signals (rawDepth, amplitude, intensity, phase) and handles ToF-specific decode settings such as phase unwrapping.

- Filtering stage: produces filtered depth output (depth) using ToF-oriented filtering presets and runtime filter configuration.

Setup differences on ToF cameras

CAM_* sockets). ToF cameras are typically used as a ToF + color camera setup (two functional camera sources), where depth comes from dai.node.ToF, not from StereoDepth.

Common integration pitfalls in generic apps:

- Do not treat the ToF sensor as a normal color preview source.

- Do not auto-create default mono stereo-pair streams on ToF-only devices.

- Build depth from ToF, and add a color stream only if needed (for example, alignment/overlay).

ToF settings

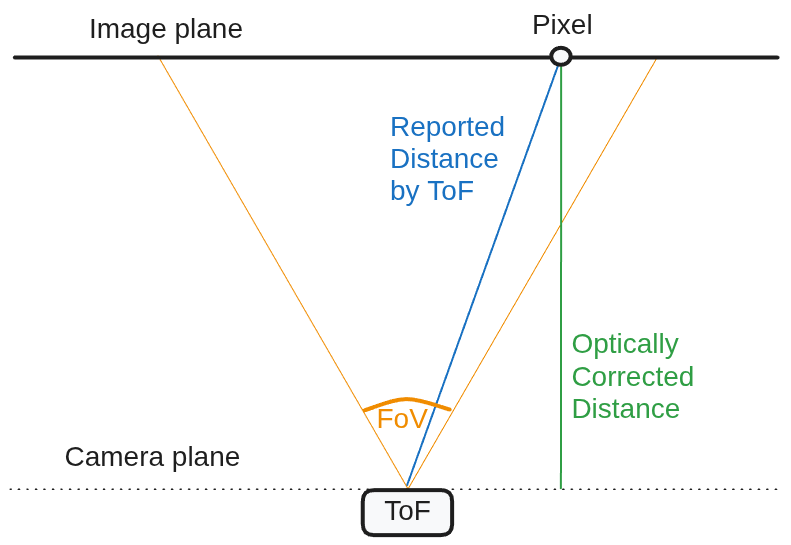

ToFConfig):- Optical correction: converts radial distance to depth (Z-map) so behavior matches stereo depth usage.

- Undistortion:

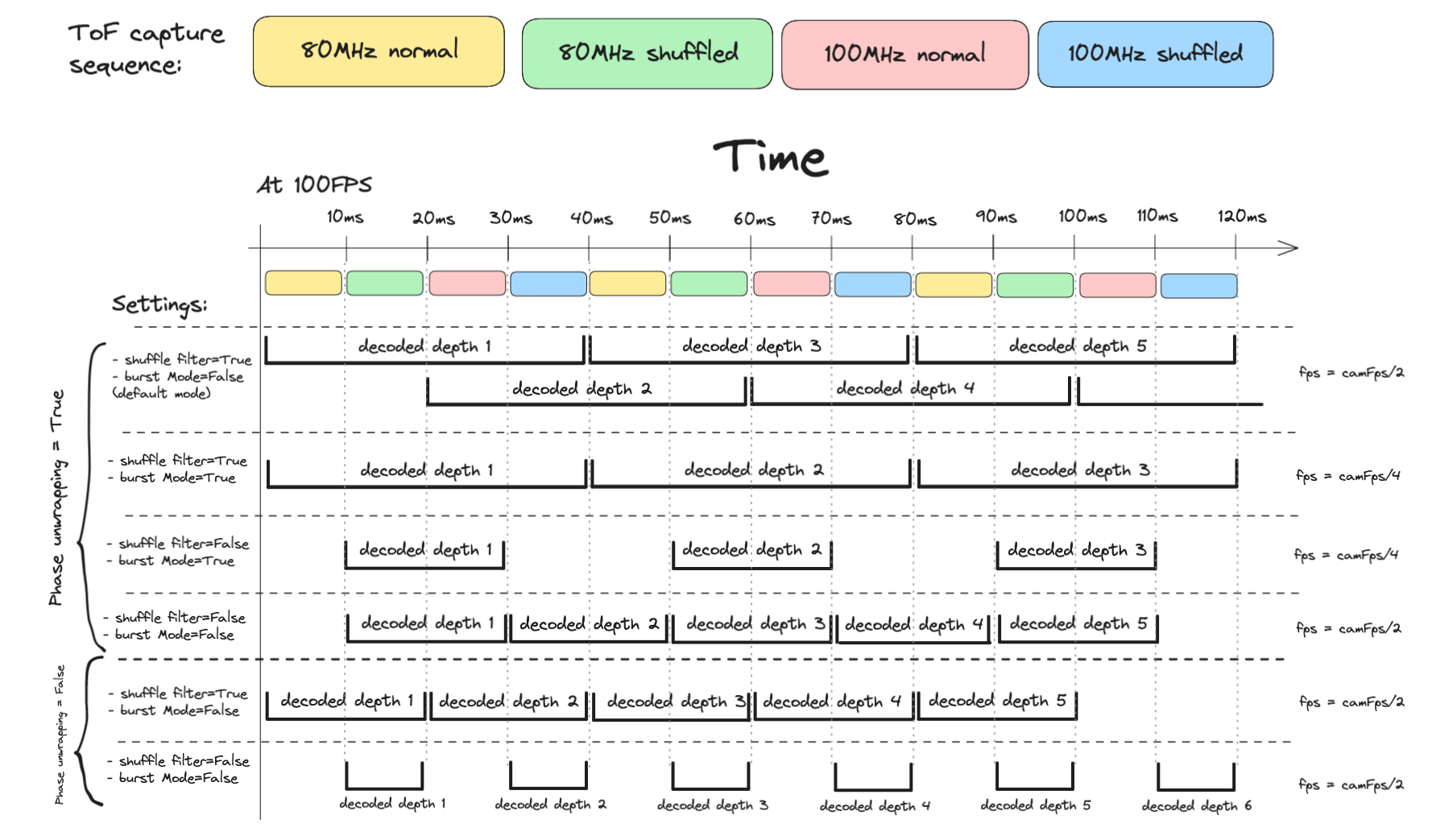

depthandamplitudeare undistorted by default. - Phase unwrapping: extends range at the cost of more noise.

- Phase shuffle temporal filter: reduces noise by combining shuffled/non-shuffled captures.

- Burst mode: avoids frame reuse in decode, trading output rate for reduced motion artifacts.

0(disabled): up to ~1.87 m (80 MHz)1: up to ~3.0 m2: up to ~4.5 m3: up to ~6.0 m4: up to ~7.5 m
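To illustrate what optical correction means, the sketch below converts a radial distance (measured along a pixel's viewing ray) into a Z depth using a plain pinhole camera model. This is not the DepthAI implementation: the function name radial_to_z and the intrinsics values (fx, fy, cx, cy) are hypothetical, and lens distortion is ignored.

```python
import math


def radial_to_z(radial_mm, u, v, fx, fy, cx, cy):
    """Convert radial distance along a pixel ray to Z depth (pinhole model)."""
    # Normalized image-plane coordinates of pixel (u, v).
    x = (u - cx) / fx
    y = (v - cy) / fy
    # The ray direction is (x, y, 1); dividing by its length projects the
    # radial distance onto the optical (Z) axis.
    return radial_mm / math.sqrt(x * x + y * y + 1.0)


# Hypothetical intrinsics for a 640x480 sensor (assumed values).
fx = fy = 500.0
cx, cy = 320.0, 240.0

center = radial_to_z(1000.0, 320, 240, fx, fy, cx, cy)  # on the optical axis
corner = radial_to_z(1000.0, 0, 0, fx, fy, cx, cy)      # far from the axis
print(center, corner)
```

On the optical axis the two quantities coincide; toward the image corners the Z depth becomes noticeably smaller than the radial distance, which is why uncorrected ToF data makes flat walls look curved.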

ImageFilters and ImageFiltersConfig. For confidence-driven cleanup, see ToFDepthConfidenceFilterConfig.

Quick Tuning Guide: Depth Image Filters

delta is typically interpreted in depth units (usually millimeters), while alpha is a blending factor in [0.0, 1.0]. Treat all values below as starting points and tune for your scene.

Step 1: Blank slate

Step 2: Temporal filter (start here)

Tune TemporalFilterParams first because it controls dynamic smoothing over time.

- delta: threshold for deciding whether a depth change is significant vs noise.

  - Start with delta = 15.

  - For larger objects (for example ~50 mm object height), use delta = 25 so motion updates quickly.

  - For small objects, tighten to delta = 10.

- alpha: blending weight of current frame vs history.

  - Lower alpha (for example 0.1) gives smoother output but can introduce lag/trails.

  - Higher alpha (for example 0.4) updates faster but smooths less.

- Important: delta = 0 makes nearly every change significant and largely bypasses temporal blending.
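The interaction between delta and alpha can be sketched for a single pixel. The following is a minimal illustration of a delta/alpha temporal update as described above, not the filter code that runs on the device; the function name temporal_step is hypothetical.

```python
def temporal_step(previous, current, delta, alpha):
    """One per-pixel update of a delta/alpha temporal filter (illustrative)."""
    if abs(current - previous) > delta:
        # Change exceeds the noise threshold: treat it as real motion
        # and follow the new value immediately.
        return float(current)
    # Small change: blend the current sample with the history.
    return previous + alpha * (current - previous)


# Depth values in millimeters (assumed unit).
noise = temporal_step(1000.0, 1010.0, delta=15, alpha=0.1)   # small change, blended
motion = temporal_step(1000.0, 1100.0, delta=15, alpha=0.1)  # large change, passed through
bypass = temporal_step(1000.0, 1001.0, delta=0, alpha=0.1)   # delta = 0, passed through
print(noise, motion, bypass)
```

With delta = 0 even a 1 mm change counts as significant, so the blending branch is almost never taken. That is the bypass behavior noted in the last bullet.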

Step 3: Speckle filter

Use SpeckleFilterParams to remove isolated blobs and salt-and-pepper-like artifacts in single frames.

- differenceThreshold (boss alias: max_diff): threshold for grouping neighboring pixels into the same region.

- speckleRange (boss alias: max_speckle_size): maximum region size to remove.

- Tuning strategy: gradually increase speckleRange to remove larger floating blobs, but avoid values that delete real small objects.
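To make the roles of the two parameters concrete, here is a minimal pure-Python sketch of region-based speckle removal. It assumes 4-connected neighbors and treats 0 as invalid depth; the function name remove_speckles is hypothetical, and the on-device filter may differ in connectivity and other details.

```python
from collections import deque


def remove_speckles(depth, speckle_range, difference_threshold):
    """Zero out connected regions of at most speckle_range pixels."""
    rows, cols = len(depth), len(depth[0])
    out = [row[:] for row in depth]
    seen = [[False] * cols for _ in range(rows)]
    for r0 in range(rows):
        for c0 in range(cols):
            if seen[r0][c0] or depth[r0][c0] == 0:
                continue
            # Grow a region from this seed pixel with a breadth-first search.
            region, queue = [], deque([(r0, c0)])
            seen[r0][c0] = True
            while queue:
                r, c = queue.popleft()
                region.append((r, c))
                for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
                    if not (0 <= nr < rows and 0 <= nc < cols):
                        continue
                    if seen[nr][nc] or depth[nr][nc] == 0:
                        continue
                    # Neighbors join the region only if their depth is close.
                    if abs(depth[nr][nc] - depth[r][c]) <= difference_threshold:
                        seen[nr][nc] = True
                        queue.append((nr, nc))
            # Small regions are considered speckles and invalidated.
            if len(region) <= speckle_range:
                for r, c in region:
                    out[r][c] = 0
    return out


frame = [
    [1000, 1000, 1000, 1000],
    [1000, 1000, 400, 1000],
    [1000, 1000, 1000, 1000],
]
cleaned = remove_speckles(frame, speckle_range=2, difference_threshold=20)
print(cleaned)
```

The single 400 mm pixel forms its own one-pixel region and is removed, while the surrounding surface stays intact. Raising speckle_range far enough would start deleting genuinely small objects as well.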

Step 4: Spatial filter

Use SpatialFilterParams to smooth object surfaces while preserving edges.

- alpha: smoothing intensity (lower values usually smooth more).

- delta: edge-preservation threshold; larger depth jumps are treated as edges and are not blended.

- holeFillingRadius: fills invalid (0) pixels from surrounding valid data.

- numIterations: more passes can refine output at slight processing cost.
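The edge-preserving behavior can be illustrated on a single image row. The sketch below is a simplified recursive smoother under stated assumptions: alpha is the weight of the current pixel (so lower alpha smooths more), jumps of delta or more are left untouched, and hole filling is omitted. The function name smooth_row is hypothetical and this is not the device implementation.

```python
def smooth_row(row, alpha, delta, num_iterations=1):
    """Edge-preserving recursive smoothing of one depth row (illustrative)."""
    out = [float(v) for v in row]
    for _ in range(num_iterations):
        # One left-to-right pass (step = 1), then one right-to-left pass (step = -1).
        for step in (1, -1):
            order = range(1, len(out)) if step == 1 else range(len(out) - 2, -1, -1)
            for i in order:
                neighbor = out[i - step]
                # Skip invalid (0) pixels and depth jumps of delta or more (edges).
                if out[i] == 0 or neighbor == 0 or abs(out[i] - neighbor) >= delta:
                    continue
                out[i] = alpha * out[i] + (1.0 - alpha) * neighbor
    return out


# A noisy surface near 1000 mm next to a second surface near 2000 mm.
row = [1000, 1004, 996, 1002, 2000, 2003, 1998]
smoothed = smooth_row(row, alpha=0.5, delta=20)
print(smoothed)
```

Noise within each surface is reduced, while the roughly one-meter step between the two surfaces is not blended because it exceeds delta.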

Step 5: Median filter

Use MedianFilterParams for final aggressive cleanup when residual salt-and-pepper noise remains.

- Supported modes in this node: MEDIAN_OFF, KERNEL_3x3, KERNEL_5x5.

- Use the smallest kernel that solves the noise to minimize edge/detail loss.
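As an illustration of what a 3x3 median kernel does to an isolated outlier, here is a minimal pure-Python sketch. The function name median_3x3 is hypothetical, border pixels are left unchanged for simplicity, and the on-device filter may handle borders and invalid pixels differently.

```python
def median_3x3(depth):
    """Apply a 3x3 median to interior pixels; borders are copied unchanged."""
    rows, cols = len(depth), len(depth[0])
    out = [row[:] for row in depth]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            # Collect the 9 values of the 3x3 window and take the middle one.
            window = sorted(
                depth[r + dr][c + dc] for dr in (-1, 0, 1) for dc in (-1, 0, 1)
            )
            out[r][c] = window[4]
    return out


frame = [
    [1000, 1002, 1001],
    [999, 5000, 1000],
    [1001, 1000, 998],
]
filtered = median_3x3(frame)
print(filtered[1][1])
```

A larger kernel removes bigger outlier clusters in the same way but also erases more fine detail, which is why the smallest effective kernel is recommended.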

Tuning quick reference

| Filter | Key Parameters | Primary Use Case | Trade-off |
|---|---|---|---|
| Temporal | delta, alpha | Smoothing dynamic noise over time | Smoothness vs motion lag |
| Speckle | speckleRange, differenceThreshold | Removing isolated blobs/noise | Cleanliness vs deleting small objects |
| Spatial | delta, alpha, holeFillingRadius, numIterations | Surface smoothing with edge preservation | Smoothness vs processing cost |
| Median | KERNEL_3x3, KERNEL_5x5 | Aggressive salt-and-pepper cleanup | Noise removal vs edge/detail blur |

ImageFilters, ImageFiltersConfig, and ToFDepthConfidenceFilterConfig.

ToF motion blur

- Increase sensor FPS (up to 160 FPS), while accounting for higher system load and reduced exposure time.

- Disable phase shuffle temporal filter (higher noise).

- Disable phase unwrapping (reduces max range).

- Enable burst mode when applicable.

Max distance
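The relationships in this section can be evaluated numerically with a few lines of Python. This is a minimal sketch: the function names are hypothetical, and the default of 0 for the error threshold is an assumption made only for illustration, since its unit is not stated here.

```python
C_M_PER_S = 299_792_458.0  # speed of light in m/s


def unambiguous_range_m(modulation_hz):
    """Distance at which the measured phase wraps around for one frequency."""
    return C_M_PER_S / (2.0 * modulation_hz)


def max_distance_m(modulation_hz, phase_unwrapping_level,
                   phase_unwrap_error_threshold=0.0):
    """Evaluate the max-distance formula of this section for one frequency."""
    base = unambiguous_range_m(modulation_hz)
    # The threshold is passed through exactly as in the formula (assumed default 0).
    return (phase_unwrapping_level + 1) * base + phase_unwrap_error_threshold / 2.0


for level in range(5):
    d80 = max_distance_m(80e6, level)
    d100 = max_distance_m(100e6, level)
    print(f"level {level}: 80 MHz {d80:.2f} m, 100 MHz {d100:.2f} m")
```

Each additional unwrapping level adds one more unambiguous range for the given modulation frequency, so the range grows linearly with the level.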

Math

    c = 299792458.0  # speed of light in m/s

    MAX_80MHZ_M = c / (80000000 * 2) = 1.873 m
    MAX_100MHZ_M = c / (100000000 * 2) = 1.498 m

    MAX_DIST_80MHZ_M = (phaseUnwrappingLevel + 1) * 1.873 + (phaseUnwrapErrorThreshold / 2)
    MAX_DIST_100MHZ_M = (phaseUnwrappingLevel + 1) * 1.498 + (phaseUnwrapErrorThreshold / 2)

ToF FPS

Usage

Python

    import depthai as dai

    pipeline = dai.Pipeline()

    # Let the device pick the ToF socket and start from a mid-range filter preset
    socket = dai.CameraBoardSocket.AUTO
    preset = dai.ImageFiltersPresetMode.TOF_MID_RANGE
    tof = pipeline.create(dai.node.ToF).build(socket, preset)

    # Base and filtered outputs
    raw_depth_q = tof.rawDepth.createOutputQueue()
    depth_q = tof.depth.createOutputQueue()

    # Runtime configuration queues (where available in the selected API binding)
    base_cfg_q = tof.tofBaseInputConfig.createInputQueue()
    filters_cfg_q = tof.imageFiltersInputConfig.createInputQueue()

Examples

Reference

class

dai::node::ToF

variable

variable

Subnode< ImageFilters > imageFilters

variable

variable

Output & depth

Filtered depth output

variable

Output & amplitude

Amplitude output

variable

Output & intensity

Intensity output

variable

Output & phase

Phase output

variable

variable

Input & imageFiltersInputConfig

Input config for image filters

variable

variable

ImageFilters & imageFiltersNode

Image filters node

inline function

ToF(const std::shared_ptr< Device > & device)

function

~ToF()

inline function

void buildInternal()

inline function

std::shared_ptr< ToF > build(dai::CameraBoardSocket boardSocket, dai::ImageFiltersPresetMode presetMode, std::optional< float > fps)

Need assistance?

Head over to Discussion Forum for technical support or any other questions you might have.