Camera

The Camera node can be controlled at runtime through InputControl and InputConfig. It aims to unify the ColorCamera and MonoCamera into one node.

Compared to the ColorCamera node, the Camera node:

- Supports cam.setSize(), which replaces both cam.setResolution() and cam.setIspScale(). The Camera node automatically finds the resolution that fits best and applies the correct scaling to achieve the user-selected size

- Supports cam.setCalibrationAlpha(), example here: Undistort camera stream

- Supports cam.loadMeshData() and cam.setMeshStep(), which can be used for custom image warping (undistortion, perspective correction, etc.)

- Automatically undistorts the camera stream if the HFOV of the camera is greater than 85°. You can disable this with cam.setMeshSource(dai.CameraProperties.WarpMeshSource.NONE)

- Doesn't have an out output, as it has the same outputs as ColorCamera (raw, isp, still, preview, video). For a mono sensor, this means that preview will output 3 planes of the same grayscale frame (3x overhead), and isp/video/still will output luma (useful grayscale information) + chroma (all values are 128), which results in 1.5x bandwidth overhead
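The overhead figures in the last point can be checked with a few lines of arithmetic. This is a minimal sketch in plain Python, not DepthAI code: `frame_bytes` is a hypothetical helper, and the 1280x800 mono resolution is only an assumed example.

```python
def frame_bytes(width: int, height: int) -> dict:
    """Bytes per frame when a mono sensor is routed through each output type."""
    gray = width * height  # one 8-bit luma plane: the only useful information
    return {
        "gray": gray,
        # preview: three 8-bit planes, each a copy of the same grayscale frame
        "preview": 3 * gray,
        # isp (YUV420 planar) and video/still (NV12): full-size luma plus
        # 2x2-subsampled chroma, i.e. half a plane of constant 128 values
        "yuv420": gray + gray // 2,
    }


sizes = frame_bytes(1280, 800)  # assumed example resolution
for name, size in sizes.items():
    print(f"{name:8s} {size:>9d} bytes ({size / sizes['gray']:.1f}x)")
```

The ratios do not depend on the resolution, so the same 3x and 1.5x factors apply to any mono sensor size.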

How to place it

Python

    import depthai as dai

    pipeline = dai.Pipeline()
    cam = pipeline.create(dai.node.Camera)

Inputs and Outputs

Detailed node connections

- inputConfig - ImageManipConfig

- inputControl - CameraControl

- raw - ImgFrame - RAW10 bayer data. Demo code for unpacking here

- isp - ImgFrame - YUV420 planar (same as YU12/IYUV/I420)

- still - ImgFrame - NV12, suitable for bigger size frames. The image gets created when a capture event is sent to the Camera, so it's like taking a photo

- preview - ImgFrame - RGB (or BGR planar/interleaved if configured), mostly suited for small size previews and to feed the image into NeuralNetwork

- video - ImgFrame - NV12, suitable for bigger size frames

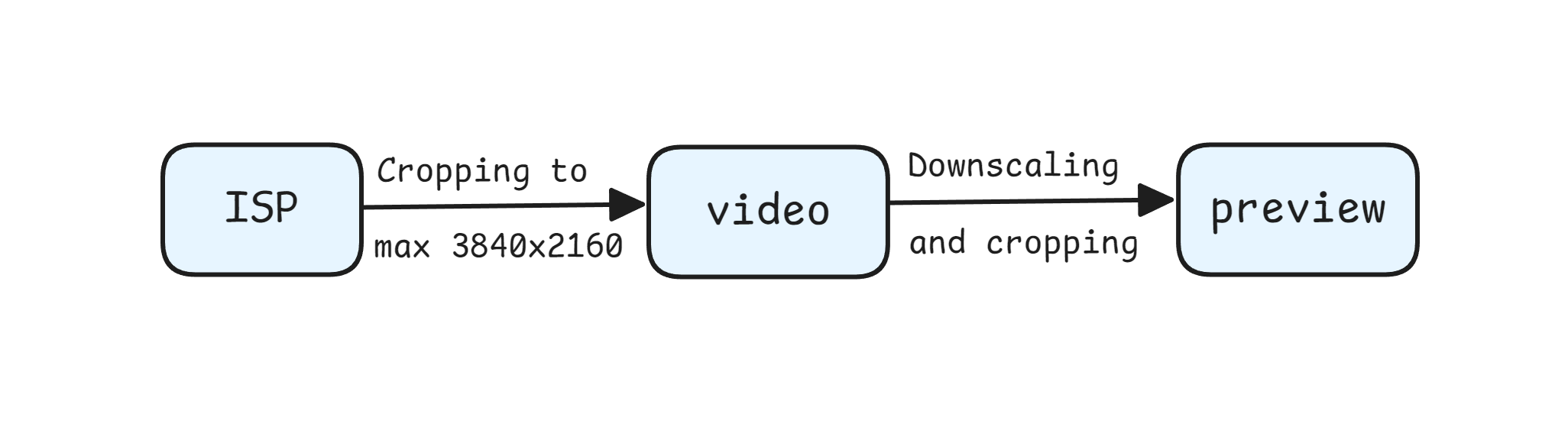

Image post-processing converts the YUV420 planar frames from isp into video/preview/still frames. still (when a capture is triggered) and isp work at the max camera resolution, while video and preview are limited to max 4K (3840 x 2160) resolution, which is cropped from isp. For IMX378 (12MP), the post-processing works as follows.

The image above is the isp output from the Camera (12MP resolution from IMX378). If you aren't downscaling ISP, the video output is cropped to 4K (max 3840x2160 due to the limitation of the video output), as represented by the blue rectangle. The yellow rectangle represents a cropped preview output when the preview size is set to a 1:1 aspect ratio (e.g. when using a 300x300 preview size for the MobileNet-SSD NN model), because the preview output is derived from the video output.

Usage
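The crop geometry described for the isp, video and preview outputs can be reproduced with simple arithmetic. This is a rough sketch in plain Python, not DepthAI code. It assumes a 4056x3040 isp frame for the 12MP IMX378 and assumes that both crops are centered. The helper names are hypothetical.

```python
ISP_SIZE = (4056, 3040)    # assumed isp size of the 12MP IMX378
VIDEO_MAX = (3840, 2160)   # 4K limit of the video output (from the text)
PREVIEW_SIZE = (300, 300)  # 1:1 preview, e.g. for MobileNet-SSD


def centered(outer, inner):
    """Top-left offset that centers an inner (w, h) inside an outer (w, h)."""
    return ((outer[0] - inner[0]) // 2, (outer[1] - inner[1]) // 2)


def largest_with_aspect(outer, aspect_w, aspect_h):
    """Largest (w, h) with the given aspect ratio that fits inside outer."""
    w, h = outer
    if w * aspect_h > h * aspect_w:  # outer is wider than the target aspect
        return (h * aspect_w // aspect_h, h)
    return (w, w * aspect_h // aspect_w)


# video: isp cropped to at most 4K (blue rectangle)
video_size = (min(ISP_SIZE[0], VIDEO_MAX[0]), min(ISP_SIZE[1], VIDEO_MAX[1]))
video_offset = centered(ISP_SIZE, video_size)

# preview: video cropped to the preview aspect ratio (yellow rectangle),
# then scaled down to PREVIEW_SIZE
preview_crop = largest_with_aspect(video_size, *PREVIEW_SIZE)
preview_offset = centered(video_size, preview_crop)

print("video crop  ", video_size, "at", video_offset, "within isp")
print("preview crop", preview_crop, "at", preview_offset, "within video")
```

Under these assumptions a 300x300 preview sees only the central 2160x2160 region of the video frame, so objects near the left and right edges of the sensor fall outside the preview.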

Python

    import depthai as dai

    pipeline = dai.Pipeline()
    cam = pipeline.create(dai.node.Camera)
    cam.setPreviewSize(300, 300)
    cam.setBoardSocket(dai.CameraBoardSocket.CAM_A)
    # Instead of setting the resolution, the user can specify a size, which sets
    # the sensor resolution to the best fit and also applies scaling
    cam.setSize(1280, 720)

3A Algorithms

- Stereo Cameras: Sensors share the same I2C bus, ensuring synchronized 3A settings automatically (AWB, AE).

- Independent Sensors: On setups like OAK FFC or OAK-D-LR, where each sensor has its own I2C, the 3a-follow feature can be used to synchronize 3A settings from one sensor to others.

Python

    # cam is assumed to be a dict of Camera nodes keyed by name
    cam['cam_b'].initialControl.setMisc("3a-follow", dai.CameraBoardSocket.CAM_A)
    cam['cam_c'].initialControl.setMisc("3a-follow", dai.CameraBoardSocket.CAM_A)

The 3a-follow feature copies the 3A settings (exposure, ISO, and white balance) from a primary camera (e.g., CAM_A) to other cameras in the setup (e.g., CAM_B and CAM_C).

Limitations

- ISP can process about 600 MP/s, or about 500 MP/s when the pipeline is also running NNs and a video encoder in parallel

- 3A algorithms can process about 200 to 250 FPS overall (summed over all camera streams). This is a current limitation of our implementation; we plan a workaround that runs the 3A algorithms only on every Xth frame, but there is no ETA yet
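A planned camera setup can be compared against these limits before building the pipeline. The sketch below is plain Python and rests on assumptions: it treats the ISP load as the sum of width x height x FPS over all sensors, uses the lower bound of the 3A range, and uses made-up example streams. `camera_load` is a hypothetical helper, not a DepthAI function.

```python
ISP_LIMIT_MP_S = 600.0       # ISP alone (from the text)
ISP_LIMIT_BUSY_MP_S = 500.0  # with NNs and video encoder running in parallel
AAA_LIMIT_FPS = 200.0        # conservative end of the 200 to 250 FPS range


def camera_load(streams):
    """Return (ISP load in MP/s, total 3A FPS) for (width, height, fps) streams."""
    isp_mp_s = sum(w * h * fps for w, h, fps in streams) / 1e6
    aaa_fps = sum(fps for _, _, fps in streams)
    return isp_mp_s, aaa_fps


# Assumed example: one 12MP color sensor at 30 FPS, two 1MP mono sensors at 60 FPS
streams = [(4056, 3040, 30), (1280, 800, 60), (1280, 800, 60)]
isp_mp_s, aaa_fps = camera_load(streams)

print(f"ISP load: {isp_mp_s:.1f} MP/s (limit {ISP_LIMIT_BUSY_MP_S:.0f} with NN/encoder)")
print(f"3A load:  {aaa_fps} FPS (limit about {AAA_LIMIT_FPS:.0f})")
print("within limits:", isp_mp_s <= ISP_LIMIT_BUSY_MP_S and aaa_fps <= AAA_LIMIT_FPS)
```

This example setup lands just under the 500 MP/s figure, so raising the color sensor FPS would be the first change to push it over.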

Examples of functionality

Reference

class

depthai.node.Camera(depthai.Node)

method

getBoardSocket(self) -> depthai.CameraBoardSocket

Retrieves which board socket to use. Returns: Board socket to use

method

getCalibrationAlpha(self) -> float|None

Get calibration alpha parameter that determines FOV of undistorted frames

method

getCamera(self) -> str

Retrieves which camera to use by name. Returns: Name of the camera to use

method

getFps(self) -> float

Get rate at which camera should produce frames. Returns: Rate in frames per second

method

getHeight(self) -> int

Get sensor resolution height

method

getImageOrientation(self) -> depthai.CameraImageOrientation

Get camera image orientation

method

getMeshSource(self) -> depthai.CameraProperties.WarpMeshSource

Gets the source of the warp mesh

method

getMeshStep(self) -> tuple[int, int]

Gets the distance between mesh points

method

getPreviewHeight(self) -> int

Get preview height

method

getPreviewSize(self) -> tuple[int, int]

Get preview size as tuple

method

getPreviewWidth(self) -> int

Get preview width

method

getSize(self) -> tuple[int, int]

Get sensor resolution as size

method

getStillHeight(self) -> int

Get still height

method

getStillSize(self) -> tuple[int, int]

Get still size as tuple

method

getStillWidth(self) -> int

Get still width

method

getVideoHeight(self) -> int

Get video height

method

getVideoSize(self) -> tuple[int, int]

Get video size as tuple

method

getVideoWidth(self) -> int

Get video width

method

getWidth(self) -> int

Get sensor resolution width

method

loadMeshData(self, warpMesh: typing_extensions.Buffer)

Specify mesh calibration data for undistortion. See `loadMeshFile` for the expected data format

method

loadMeshFile(self, warpMesh: Path)

Specify the local filesystem path to the undistort mesh calibration file. When a mesh calibration is set, it overrides the camera intrinsics/extrinsics matrices. Overrides useHomographyRectification behavior. Mesh format: a sequence of (y,x) points as 'float' with coordinates from the input image to be mapped in the output. The mesh can be subsampled, configured by `setMeshStep`. With a 1280x800 resolution and a (16,16) step, the required mesh size is:

width: 1280 / 16 + 1 = 81

height: 800 / 16 + 1 = 51
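The size arithmetic above generalizes to other resolutions and steps. The following is a minimal sketch in plain Python, not part of the DepthAI API. `mesh_dimensions` and `identity_mesh_bytes` are hypothetical helpers. The row-major point order and the little-endian 32-bit float encoding are assumptions that go beyond what the description states.

```python
import struct


def mesh_dimensions(width, height, step_x=16, step_y=16):
    """Number of mesh points per row and per column: size / step + 1."""
    return width // step_x + 1, height // step_y + 1


def identity_mesh_bytes(width, height, step_x=16, step_y=16):
    """Pass-through mesh: each point maps to its own input coordinates.

    Points are emitted as (y, x) pairs, row by row (assumed order),
    packed as little-endian float32 (assumed encoding).
    """
    mesh_w, mesh_h = mesh_dimensions(width, height, step_x, step_y)
    values = []
    for row in range(mesh_h):
        for col in range(mesh_w):
            values.append(float(row * step_y))  # y comes first
            values.append(float(col * step_x))  # then x
    return struct.pack(f"<{len(values)}f", *values)


mesh_w, mesh_h = mesh_dimensions(1280, 800)
data = identity_mesh_bytes(1280, 800)
print(f"mesh: {mesh_w} x {mesh_h} points, {len(data)} bytes")
```

A real undistortion mesh would replace the identity coordinates with the source positions computed from the camera calibration.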

method

setBoardSocket(self, boardSocket: depthai.CameraBoardSocket)

Specify which board socket to use. Parameter ``boardSocket``: Board socket to use

method

setCalibrationAlpha(self, alpha: typing.SupportsFloat)

Set calibration alpha parameter that determines FOV of undistorted frames

method

setCamera(self, name: str)

Specify which camera to use by name. Parameter ``name``: Name of the camera to use

method

setFps(self, fps: typing.SupportsFloat)

Set rate at which camera should produce frames. Parameter ``fps``: Rate in frames per second

method

setImageOrientation(self, imageOrientation: depthai.CameraImageOrientation)

Set camera image orientation

method

setIsp3aFps(self, isp3aFps: typing.SupportsInt)

ISP 3A rate (auto focus, auto exposure, auto white balance, camera controls etc.). Default (0) matches the camera FPS, meaning that 3A runs on each frame. Reducing the 3A rate reduces the CPU usage on CSS, but also slows down 3A convergence. Note that camera controls are processed at this rate. E.g. if the camera is running at 30 fps and a camera control is sent at every frame, but the 3A fps is set to 15, the camera control messages will be processed at a 15 fps rate, which will lead to queueing.

method

setMeshSource(self, source: depthai.CameraProperties.WarpMeshSource)

Set the source of the warp mesh or disable

method

setMeshStep(self, width: typing.SupportsInt, height: typing.SupportsInt)

Set the distance between mesh points. Default: (32, 32)

method

setRawOutputPacked(self, packed: bool)

Configures whether the camera `raw` frames are saved as MIPI-packed to memory. The packed format is more efficient, consuming less memory on device, and less data to send to host: RAW10: 4 pixels saved on 5 bytes, RAW12: 2 pixels saved on 3 bytes. When packing is disabled (`false`), data is saved lsb-aligned, e.g. a RAW10 pixel will be stored as uint16, on bits 9..0: 0b0000'00pp'pppp'pppp. Default is auto: enabled for standard color/monochrome cameras where ISP can work with both packed/unpacked, but disabled for other cameras like ToF.
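The "4 pixels saved on 5 bytes" layout can be illustrated with a small round trip. This is a sketch in plain Python, not the official unpacking demo. It assumes the usual MIPI CSI-2 RAW10 arrangement, where the first four bytes of a group hold the upper 8 bits of each pixel and the fifth byte holds the four 2-bit remainders, with pixel 0 in the lowest bits. Both function names are hypothetical, and any row stride padding in real frames is ignored.

```python
def pack_raw10(pixels):
    """Pack 10-bit pixel values into MIPI-style RAW10 (4 pixels per 5 bytes)."""
    if len(pixels) % 4:
        raise ValueError("RAW10 packs pixels in groups of 4")
    out = bytearray()
    for i in range(0, len(pixels), 4):
        group = pixels[i:i + 4]
        out.extend(p >> 2 for p in group)  # upper 8 bits of each pixel
        # fifth byte: the 2 low bits of each pixel, pixel 0 in bits 1..0
        out.append(sum((p & 0b11) << (2 * n) for n, p in enumerate(group)))
    return bytes(out)


def unpack_raw10(packed):
    """Unpack MIPI-style RAW10 bytes into a list of 10-bit pixel values."""
    if len(packed) % 5:
        raise ValueError("RAW10 data length must be a multiple of 5 bytes")
    pixels = []
    for i in range(0, len(packed), 5):
        b0, b1, b2, b3, low = packed[i:i + 5]
        for n, high in enumerate((b0, b1, b2, b3)):
            pixels.append((high << 2) | ((low >> (2 * n)) & 0b11))
    return pixels


sample = [0, 1, 512, 1023]
packed = pack_raw10(sample)
print(len(sample), "pixels ->", len(packed), "bytes")
print("round trip ok:", unpack_raw10(packed) == sample)
```

With packing disabled, the same four pixels would occupy 8 bytes (one uint16 each), which is the 1.6x memory difference the description refers to.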

property

frameEvent

Outputs metadata-only ImgFrame message as an early indicator of an incoming frame. It's sent on the MIPI SoF (start-of-frame) event, just after the exposure of the current frame has finished and before the exposure for next frame starts. Could be used to synchronize various processes with camera capture. Fields populated: camera id, sequence number, timestamp

property

initialControl

Initial control options to apply to sensor

property

inputConfig

Input for ImageManipConfig message, which can modify crop parameters at runtime. Default queue is non-blocking with size 8

property

inputControl

Input for CameraControl message, which can modify camera parameters at runtime. Default queue is blocking with size 8

property

isp

Outputs ImgFrame message that carries YUV420 planar (I420/IYUV) frame data. Generated by the ISP engine, and the source for the 'video', 'preview' and 'still' outputs

property

preview

Outputs ImgFrame message that carries BGR/RGB planar/interleaved encoded frame data. Suitable for use with NeuralNetwork node

property

raw

Outputs ImgFrame message that carries RAW10-packed (MIPI CSI-2 format) frame data. Captured directly from the camera sensor, and the source for the 'isp' output.

property

still

Outputs ImgFrame message that carries NV12 encoded (YUV420, UV plane interleaved) frame data. The message is sent only when a CameraControl message with the captureStill command set arrives at inputControl.

property

video

Outputs ImgFrame message that carries NV12 encoded (YUV420, UV plane interleaved) frame data. Suitable for use with VideoEncoder node
