Spatial AI

Neural inference fused with depth map

3D Object Localization

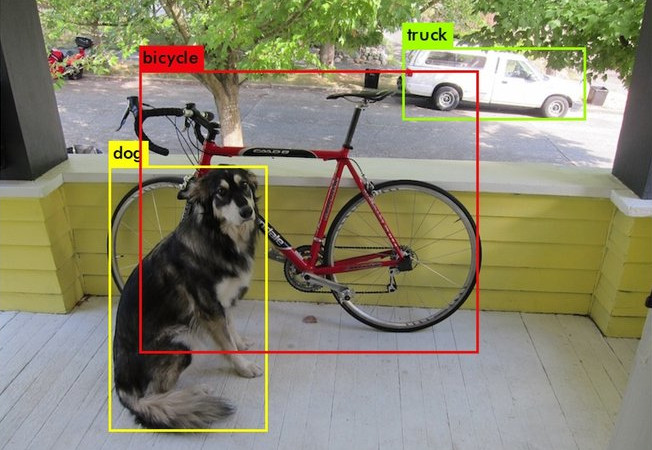

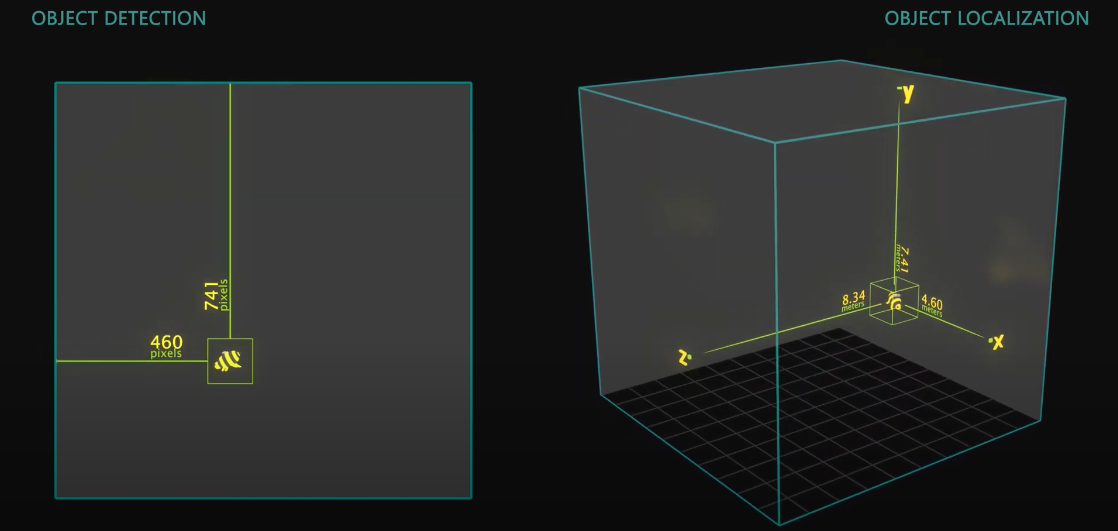

3D Object Localization (or 3D Object Detection) means finding objects in physical space instead of pixel space. It is useful when measuring or interacting with the physical world in real time. The visualization below shows the difference between Object Detection and 3D Object Localization:

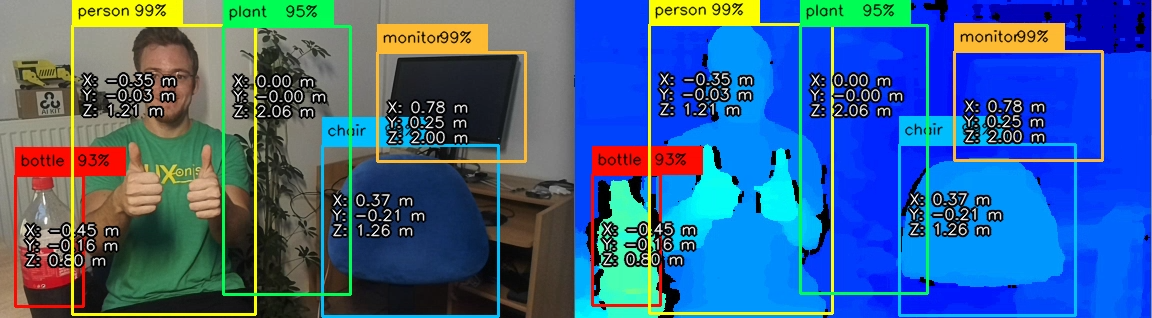

DepthAI extends these 2D neural networks (e.g., MobileNet, Yolo) with spatial information to give them 3D context. In the image above, a DepthAI application runs a MobileNet object detector on the RGB camera stream and fuses the detections with the depth map (RGB-D) to estimate the 3D position of each detected object (see the MobileNetSpatialDetectionNetwork node for more details).
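
To make the fusion step concrete, here is a minimal NumPy sketch of how a 2D bounding box and an aligned depth map can be combined into a 3D position. It is an illustration under stated assumptions, not the implementation of the MobileNetSpatialDetectionNetwork node: the function name `locate_detection`, the use of the median depth inside the box, and the intrinsics `fx`, `fy`, `cx`, `cy` are all hypothetical choices made for this example.

```python
import numpy as np

def locate_detection(depth_mm, bbox, fx, fy, cx, cy):
    """Estimate a camera-frame (x, y, z) position in millimetres for one detection.

    depth_mm: HxW depth map in millimetres, assumed aligned to the RGB frame,
              with 0 marking invalid pixels (an assumption of this sketch).
    bbox:     (xmin, ymin, xmax, ymax) in pixel coordinates.
    fx, fy, cx, cy: pinhole intrinsics of the RGB camera, in pixels.
    """
    xmin, ymin, xmax, ymax = bbox
    roi = depth_mm[ymin:ymax, xmin:xmax]
    valid = roi[roi > 0]
    if valid.size == 0:
        return None  # no usable depth inside the box

    # Median is a robust summary of the depth inside the box (assumed choice).
    z = float(np.median(valid))

    # Back-project the box centre through the pinhole model.
    u = (xmin + xmax) / 2.0
    v = (ymin + ymax) / 2.0
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy  # image convention: positive y points down
    return x, y, z

# Synthetic scene: background at 2 m, one object at 1 m.
depth = np.full((400, 640), 2000, dtype=np.uint16)
depth[100:200, 300:400] = 1000
position = locate_detection(depth, (300, 100, 400, 200),
                            fx=450.0, fy=450.0, cx=320.0, cy=200.0)
print(position)
```

The pixel-space detector only supplies the box; the depth map supplies the distance, and the camera intrinsics turn the pair into metric coordinates.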

NeuralNetwork decoding

The DepthAI API provides a simple way to decode the neural network results, including the bounding boxes, labels, and confidence scores. This is possible for Yolo and MobileNet neural network architectures. For any custom neural network, you can use the standard NeuralNetwork node, for which you will need to decode the results yourself.
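
For a custom network, decoding depends entirely on the model's output layout. As a rough sketch, suppose the model emits a flat SSD-style tensor made of rows of seven floats, `[image_id, label, confidence, xmin, ymin, xmax, ymax]`, with normalized coordinates and a negative `image_id` marking the end of valid rows. That layout is an assumption about the model, not something guaranteed by the DepthAI API, and `decode_ssd` is a hypothetical helper name.

```python
import numpy as np

def decode_ssd(raw, threshold=0.5):
    """Decode a flat SSD-style output into a list of detection dicts.

    Assumed layout (hypothetical for this sketch): rows of 7 floats,
    [image_id, label, confidence, xmin, ymin, xmax, ymax].
    """
    rows = np.asarray(raw, dtype=np.float32).reshape(-1, 7)
    detections = []
    for image_id, label, conf, xmin, ymin, xmax, ymax in rows:
        if image_id < 0:
            break  # end-of-detections marker
        if conf < threshold:
            continue  # drop low-confidence rows
        detections.append({
            "label": int(label),
            "confidence": float(conf),
            "bbox": (float(xmin), float(ymin), float(xmax), float(ymax)),
        })
    return detections

# Stand-in for the raw output layer of a custom model.
raw_output = [
    0, 15, 0.9, 0.1, 0.2, 0.5, 0.8,   # confident detection, kept
    0, 7, 0.3, 0.0, 0.0, 0.2, 0.2,    # below threshold, dropped
    -1, 0, 0, 0, 0, 0, 0,             # end marker
]
detections = decode_ssd(raw_output)
print(detections)
```

With the standard NeuralNetwork node, a function along these lines would run on the host against the raw output layer.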

3D Landmark Localization

Demos: hand landmark (above), human pose landmark, and facial landmark detection demos.

Semantic depth

In such a "negative" system, the semantic segmentation model is trained on all the surfaces that are not objects. Anything that is not one of those surfaces is considered an object, which lets the navigation system know its location and take commensurate action (stop, go around, turn around, etc.). Semantic depth is therefore extremely valuable for object avoidance and navigation planning applications.

In the image above, a person semantic segmentation model runs on RGB frames and, based on the results, the depth map is cropped to include only the person's depth.

In the example above, an autonomous lawn mower would navigate only around grass areas and avoid everything else (trees, roots, manholes, paths, etc.).

Stereo neural inference

For more information, check out the Stereo neural inference demo. Examples include finding the 3D locations of:

- Facial landmarks (eyes, ears, nose, edges of the mouth, etc.)

- Features on a product (screw holes, blemishes, etc.)

- Joints on a person (elbows, knees, hips, etc.)

- Features on a vehicle (mirrors, headlights, etc.)

- Pests or disease on a plant (i.e., features that are too small for object detection + stereo depth)
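
For small features like those listed above, a common way to get a 3D point is to detect the same feature in the left and right images and triangulate from the horizontal disparity. The sketch below shows that standard stereo relation. It assumes rectified images and a shared focal length in pixels, and the function name and the numeric values are illustrative only, not taken from the demo.

```python
def triangulate(left_px, right_px, focal_px, baseline_mm, cx, cy):
    """Triangulate one feature seen in rectified left and right images.

    left_px, right_px: (x, y) pixel coordinates of the same feature.
    focal_px:          focal length in pixels (assumed equal for both cameras).
    baseline_mm:       distance between the two cameras in millimetres.
    cx, cy:            principal point of the left camera, in pixels.
    Returns (x, y, z) in millimetres relative to the left camera, or None.
    """
    xl, yl = left_px
    xr, _ = right_px
    disparity = xl - xr
    if disparity <= 0:
        return None  # feature at infinity or mismatched detections

    z = focal_px * baseline_mm / disparity
    x = (xl - cx) * z / focal_px
    y = (yl - cy) * z / focal_px
    return x, y, z

# Hypothetical numbers: 800 px focal length, 75 mm baseline.
point = triangulate(left_px=(660.0, 400.0), right_px=(620.0, 400.0),
                    focal_px=800.0, baseline_mm=75.0, cx=640.0, cy=400.0)
print(point)
```

Because depth is inversely proportional to disparity, small pixel errors in the detected landmark matter more as the feature moves farther from the cameras.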

Need assistance?

Head over to the Discussion Forum for technical support or any other questions you might have.