Run your own CV functions on-device

As mentioned in On-device programming, you can create custom CV models with your favorite NN library, convert & compile them into a .blob, and run them on the device. This tutorial covers how to do just that.

If you are interested in training & deploying your own AI models, refer to Custom training.

Demos:

Frame concatenation - using PyTorch

Laplacian edge detection - using Kornia

Frame blurring - using Kornia

Tutorial on running custom models on OAK by Rahul Ravikumar

Harris corner detection in PyTorch by Kunal Tyagi

Create a custom model with PyTorch

TL;DR: if you are only interested in the implementation code, it’s here.

Create PyTorch NN module

We first need to create a Python class that extends PyTorch’s nn.Module. We can then put our NN logic into the

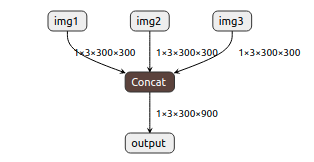

forwardfunction of the created class. In the example of frame concatenation, we can use torch.cat function to concatenate multiple frames:class CatImgs(nn.Module): def forward(self, img1, img2, img3): return torch.cat((img1, img2, img3), 3)

For a more complex module, please refer to Harris corner detection in PyTorch demo by Kunal Tyagi.
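For the frame concatenation example, it helps to know what output shape to expect on the host side. The following sketch uses plain Python (no PyTorch required) to mirror the shape rule that torch.cat follows: every dimension must match except the one being concatenated, whose sizes are summed. The helper name cat_output_shape is hypothetical and exists only for this illustration.

```python
def cat_output_shape(shapes, dim):
    """Shape produced by concatenating tensors of the given shapes along dim."""
    first = shapes[0]
    for shape in shapes[1:]:
        if len(shape) != len(first):
            raise ValueError("all inputs need the same number of dimensions")
        for axis, (a, b) in enumerate(zip(first, shape)):
            if axis != dim and a != b:
                raise ValueError(f"size mismatch in dimension {axis}: {a} vs {b}")
    out = list(first)
    out[dim] = sum(shape[dim] for shape in shapes)
    return tuple(out)


frame = (1, 3, 300, 300)  # NCHW: batch, channels, height, width
out_shape = cat_output_shape([frame, frame, frame], dim=3)
print(out_shape)  # (1, 3, 300, 900)

# Number of bytes the host would receive if the output is FP16 (2 bytes per value)
n_values = 1
for size in out_shape:
    n_values *= size
print(n_values * 2)
```

With dimension 3 being the width, the three 300x300 frames end up side by side in a single 300x900 frame.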

Keep in mind that the VPU supports only FP16, whose largest finite value is 65504. Multiplying even a few values can quickly overflow unless you normalize or divide them first.
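To get a feel for how quickly this happens, here is a minimal sketch that emulates FP16 rounding on the host with Python’s standard struct module (format code "e" is IEEE 754 half precision). It only imitates the number format, not the VPU itself, and the helper names to_fp16 and fp16_product are made up for this illustration.

```python
import math
import struct

FP16_MAX = 65504.0  # largest finite FP16 value


def to_fp16(x: float) -> float:
    """Round x to the nearest FP16 value; values that do not fit become +/-inf."""
    try:
        return struct.unpack("e", struct.pack("e", x))[0]
    except OverflowError:
        return math.copysign(math.inf, x)


def fp16_product(values):
    """Multiply values left to right, rounding to FP16 after every step."""
    acc = 1.0
    for v in values:
        acc = to_fp16(acc * to_fp16(v))
    return acc


raw = [255.0, 255.0, 255.0]        # three 8-bit pixel values
scaled = [v / 255.0 for v in raw]  # normalized to [0, 1] first

print(fp16_product(raw[:2]))  # still finite: 255 * 255 is below FP16_MAX
print(fp16_product(raw))      # overflows to inf
print(fp16_product(scaled))   # stays in range
```

Two raw pixel values can still be multiplied, but a third pushes the product past 65504, which is why scaling inputs to a small range before multiplying is the safer default.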

Export the NN module to onnx

Since PyTorch isn’t directly supported by OpenVINO, we first need to export the model to onnx format and then to OpenVINO. PyTorch has integrated support for onnx, so exporting to onnx is as simple as:

```python
# For 300x300 frames
X = torch.ones((1, 3, 300, 300), dtype=torch.float32)
torch.onnx.export(
    CatImgs(),
    (X, X, X),  # Dummy input for shape
    "path/to/model.onnx",
    opset_version=12,
    do_constant_folding=True,
)
```

This will export the concatenation model into onnx format. We can visualize the created model using the Netron app.

Simplify onnx model

When exporting a model to onnx, PyTorch isn’t very efficient. It creates many unnecessary operations/layers, which increases the size of your network (and can lead to lower FPS). That’s why we recommend using onnx-simplifier, a simple Python package that removes unnecessary operations/layers.

```python
import onnx
from onnxsim import simplify

onnx_model = onnx.load("path/to/model.onnx")
model_simplified, check = simplify(onnx_model)
assert check, "Simplified onnx model could not be validated"
onnx.save(model_simplified, "path/to/simplified/model.onnx")
```

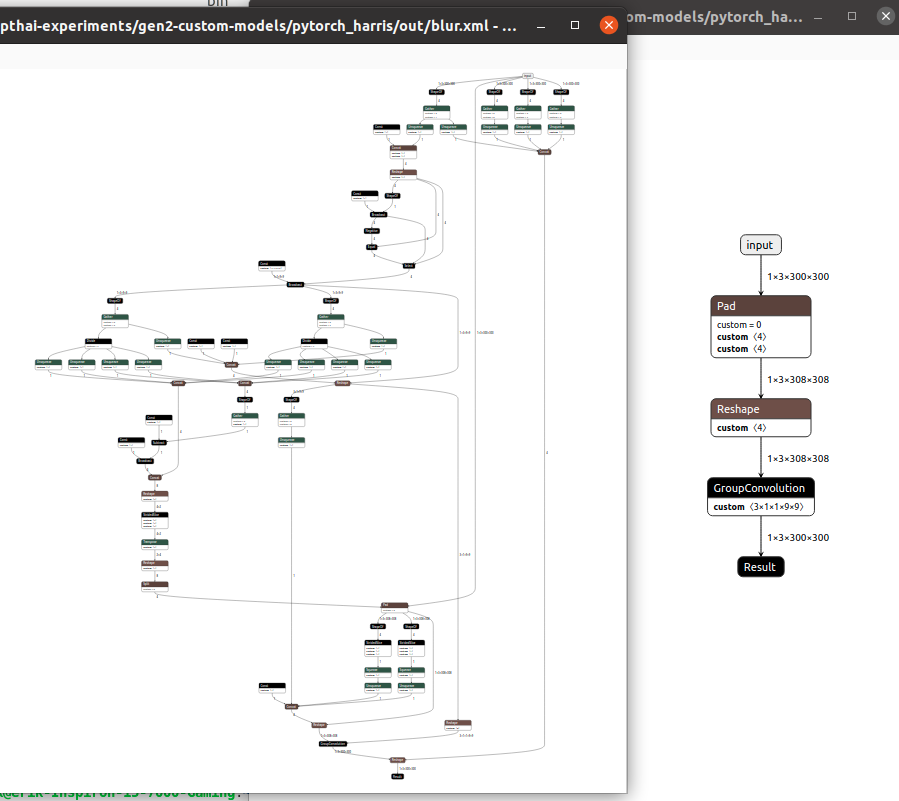

Here is an example of how significant was the simplification using the onnx-simplifier. On the left, there’s a blur model (from Kornia) exported directly from PyTorch, and on the right, there’s a simplified network of the same functionality:

Convert to OpenVINO/blob

Now that we have a (simplified) onnx model, we can convert it to OpenVINO and then to the .blob format. For additional information about converting models, see Converting model to MyriadX blob.

This would usually be done by first using OpenVINO’s Model Optimizer to convert from onnx to IR format (.bin/.xml) and then using the Compile Tool to compile to .blob. But we could also use blobconverter to convert from onnx directly to .blob.

Blobconverter just does both of these steps at once, without the need to install OpenVINO. You can compile your onnx model like this:

```python
import blobconverter

blobconverter.from_onnx(
    model="/path/to/model.onnx",
    output_dir="/path/to/output/",  # directory in which the .blob is saved
    data_type="FP16",
    shaves=6,
    use_cache=False,
    optimizer_params=[],
)
```

Use the .blob in your pipeline

You can now use your .blob model with the NeuralNetwork node. Check depthai-experiments/custom-models to run the demo applications that use these custom models.
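As a rough sketch of that last step, assuming the DepthAI 2.x Python API, a single-input model such as the blur example, and a 300x300 input, the pipeline could be wired up as follows. The function names, the stream name "nn", and the blob path are placeholders chosen for this illustration; the demos in depthai-experiments remain the reference.

```python
def build_pipeline(blob_path, size=300):
    """Camera preview -> NeuralNetwork node -> XLink output stream 'nn'."""
    # Imported here so the sketch can be loaded without the depthai package.
    import depthai as dai

    pipeline = dai.Pipeline()

    cam = pipeline.create(dai.node.ColorCamera)
    cam.setPreviewSize(size, size)  # must match the model's input size
    cam.setInterleaved(False)       # planar (CHW) frames

    nn = pipeline.create(dai.node.NeuralNetwork)
    nn.setBlobPath(blob_path)
    cam.preview.link(nn.input)

    xout = pipeline.create(dai.node.XLinkOut)
    xout.setStreamName("nn")
    nn.out.link(xout.input)
    return pipeline


def read_one_result(blob_path):
    """Run the pipeline on a connected OAK device and return one output as floats."""
    import depthai as dai

    with dai.Device(build_pipeline(blob_path)) as device:
        queue = device.getOutputQueue(name="nn", maxSize=4, blocking=False)
        return queue.get().getFirstLayerFp16()
```

Calling read_one_result("path/to/model.blob") requires a connected OAK device. For a 300x300 blur model, the returned list should hold 3 * 300 * 300 values that can be reshaped to (3, 300, 300) on the host.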

Kornia

Kornia, “State-of-the-art and curated Computer Vision algorithms for AI.”, has a set of common computer vision algorithms implemented in PyTorch. This allows users to do something similar to:

import kornia

class Model(nn.Module):

def forward(self, image):

return kornia.filters.gaussian_blur2d(image, (9, 9), (2.5, 2.5))

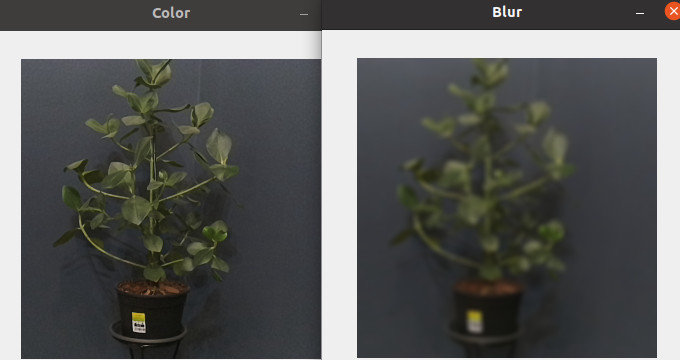

and use the exact same procedure as described in Create a custom model with PyTorch to achieve frame blurring, as shown below:

Note

During our testing, we found that several algorithms aren’t supported by either the OpenVINO framework or the VPU. We have already submitted an issue for the Sobel filter.