Feature Detector

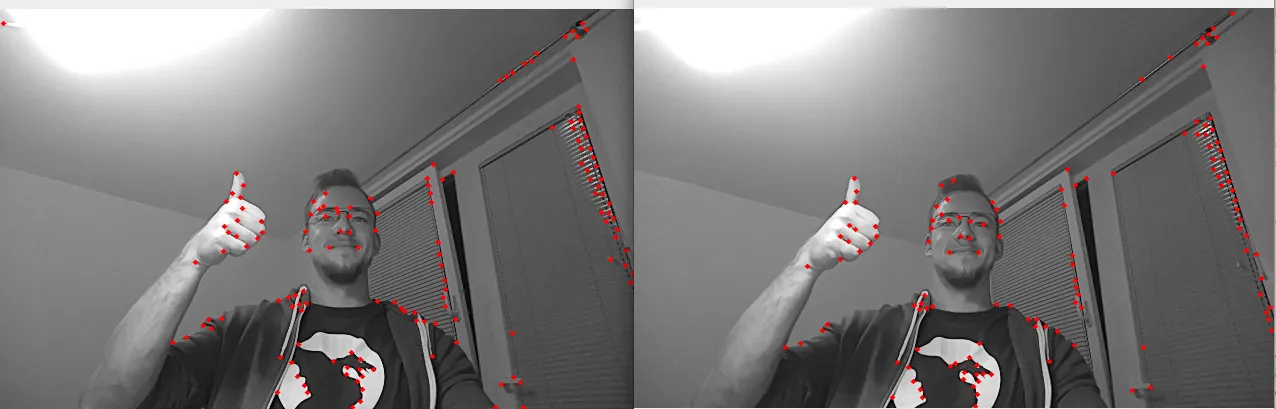

Demo

Setup

Command Line

    git clone https://github.com/luxonis/depthai-python.git
    cd depthai-python/examples
    python3 install_requirements.py

Source code

Python


    #!/usr/bin/env python3

    import cv2
    import depthai as dai

    # Create pipeline
    pipeline = dai.Pipeline()

    # Define sources and outputs
    monoLeft = pipeline.create(dai.node.MonoCamera)
    monoRight = pipeline.create(dai.node.MonoCamera)
    featureTrackerLeft = pipeline.create(dai.node.FeatureTracker)
    featureTrackerRight = pipeline.create(dai.node.FeatureTracker)

    xoutPassthroughFrameLeft = pipeline.create(dai.node.XLinkOut)
    xoutTrackedFeaturesLeft = pipeline.create(dai.node.XLinkOut)
    xoutPassthroughFrameRight = pipeline.create(dai.node.XLinkOut)
    xoutTrackedFeaturesRight = pipeline.create(dai.node.XLinkOut)
    xinTrackedFeaturesConfig = pipeline.create(dai.node.XLinkIn)

    xoutPassthroughFrameLeft.setStreamName("passthroughFrameLeft")
    xoutTrackedFeaturesLeft.setStreamName("trackedFeaturesLeft")
    xoutPassthroughFrameRight.setStreamName("passthroughFrameRight")
    xoutTrackedFeaturesRight.setStreamName("trackedFeaturesRight")
    xinTrackedFeaturesConfig.setStreamName("trackedFeaturesConfig")

    # Properties
    monoLeft.setResolution(dai.MonoCameraProperties.SensorResolution.THE_400_P)
    monoLeft.setCamera("left")
    monoRight.setResolution(dai.MonoCameraProperties.SensorResolution.THE_400_P)
    monoRight.setCamera("right")

    # Disable optical flow, so features are only detected, not tracked
    featureTrackerLeft.initialConfig.setMotionEstimator(False)
    featureTrackerRight.initialConfig.setMotionEstimator(False)

    # Linking
    monoLeft.out.link(featureTrackerLeft.inputImage)
    featureTrackerLeft.passthroughInputImage.link(xoutPassthroughFrameLeft.input)
    featureTrackerLeft.outputFeatures.link(xoutTrackedFeaturesLeft.input)
    xinTrackedFeaturesConfig.out.link(featureTrackerLeft.inputConfig)

    monoRight.out.link(featureTrackerRight.inputImage)
    featureTrackerRight.passthroughInputImage.link(xoutPassthroughFrameRight.input)
    featureTrackerRight.outputFeatures.link(xoutTrackedFeaturesRight.input)
    xinTrackedFeaturesConfig.out.link(featureTrackerRight.inputConfig)

    # Host-side copy of the config; it is modified and re-sent on each 's' key press
    featureTrackerConfig = featureTrackerRight.initialConfig.get()

    print("Press 's' to switch between Harris and Shi-Tomasi corner detector!")

    # Connect to device and start pipeline
    with dai.Device(pipeline) as device:

        # Output queues used to receive the results (maxSize=8, blocking=False)
        passthroughImageLeftQueue = device.getOutputQueue("passthroughFrameLeft", 8, False)
        outputFeaturesLeftQueue = device.getOutputQueue("trackedFeaturesLeft", 8, False)
        passthroughImageRightQueue = device.getOutputQueue("passthroughFrameRight", 8, False)
        outputFeaturesRightQueue = device.getOutputQueue("trackedFeaturesRight", 8, False)

        inputFeatureTrackerConfigQueue = device.getInputQueue("trackedFeaturesConfig")

        leftWindowName = "left"
        rightWindowName = "right"

        def drawFeatures(frame, features):
            # Draw each detected feature as a small filled red circle (BGR color)
            pointColor = (0, 0, 255)
            circleRadius = 2
            for feature in features:
                cv2.circle(frame, (int(feature.position.x), int(feature.position.y)), circleRadius, pointColor, -1, cv2.LINE_AA, 0)

        while True:
            inPassthroughFrameLeft = passthroughImageLeftQueue.get()
            passthroughFrameLeft = inPassthroughFrameLeft.getFrame()
            leftFrame = cv2.cvtColor(passthroughFrameLeft, cv2.COLOR_GRAY2BGR)

            inPassthroughFrameRight = passthroughImageRightQueue.get()
            passthroughFrameRight = inPassthroughFrameRight.getFrame()
            rightFrame = cv2.cvtColor(passthroughFrameRight, cv2.COLOR_GRAY2BGR)

            trackedFeaturesLeft = outputFeaturesLeftQueue.get().trackedFeatures
            drawFeatures(leftFrame, trackedFeaturesLeft)

            trackedFeaturesRight = outputFeaturesRightQueue.get().trackedFeatures
            drawFeatures(rightFrame, trackedFeaturesRight)

            # Show the frame
            cv2.imshow(leftWindowName, leftFrame)
            cv2.imshow(rightWindowName, rightFrame)

            key = cv2.waitKey(1)
            if key == ord('q'):
                break
            elif key == ord('s'):
                # Toggle the corner detector type (the enum member is spelled SHI_THOMASI)
                if featureTrackerConfig.cornerDetector.type == dai.FeatureTrackerConfig.CornerDetector.Type.HARRIS:
                    featureTrackerConfig.cornerDetector.type = dai.FeatureTrackerConfig.CornerDetector.Type.SHI_THOMASI
                    print("Switching to Shi-Tomasi")
                else:
                    featureTrackerConfig.cornerDetector.type = dai.FeatureTrackerConfig.CornerDetector.Type.HARRIS
                    print("Switching to Harris")

                # Send the updated config to the device
                cfg = dai.FeatureTrackerConfig()
                cfg.set(featureTrackerConfig)
                inputFeatureTrackerConfigQueue.send(cfg)

Pipeline
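The node graph built by the example's linking section can be written down as a simple edge list. The sketch below is plain Python and needs no device. The port names are copied from the listing above, while `PIPELINE_LINKS` and `downstream` are hypothetical names used only for illustration and are not part of the DepthAI API.

```python
# Edge list of the example's pipeline: (output port, input port).
# PIPELINE_LINKS and downstream() are illustrative names, not DepthAI API.
PIPELINE_LINKS = [
    ("monoLeft.out", "featureTrackerLeft.inputImage"),
    ("featureTrackerLeft.passthroughInputImage", "xoutPassthroughFrameLeft.input"),
    ("featureTrackerLeft.outputFeatures", "xoutTrackedFeaturesLeft.input"),
    ("xinTrackedFeaturesConfig.out", "featureTrackerLeft.inputConfig"),
    ("monoRight.out", "featureTrackerRight.inputImage"),
    ("featureTrackerRight.passthroughInputImage", "xoutPassthroughFrameRight.input"),
    ("featureTrackerRight.outputFeatures", "xoutTrackedFeaturesRight.input"),
    ("xinTrackedFeaturesConfig.out", "featureTrackerRight.inputConfig"),
]


def downstream(output_name, links=PIPELINE_LINKS):
    """Return every input port that the given output port is linked to."""
    return [dst for src, dst in links if src == output_name]


# Print the graph one link per line
for src, dst in PIPELINE_LINKS:
    print(f"{src} -> {dst}")
```

Looking up `xinTrackedFeaturesConfig.out` with `downstream` returns the `inputConfig` of both feature trackers. A single XLinkIn stream feeds both nodes, so one press of `s` switches the corner detector on the left and right streams together.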
