Warp Mesh


Setup

Command Line

1git clone https://github.com/luxonis/depthai-python.git

2cd depthai-python/examples

3python3 install_requirements.pyDemo

Source code

Python

PythonGitHub

1#!/usr/bin/env python3

2import cv2

3import depthai as dai

4import numpy as np

5

6# Create pipeline

7pipeline = dai.Pipeline()

8

9camRgb = pipeline.create(dai.node.ColorCamera)

10camRgb.setPreviewSize(496, 496)

11camRgb.setInterleaved(False)

12maxFrameSize = camRgb.getPreviewWidth() * camRgb.getPreviewHeight() * 3

13

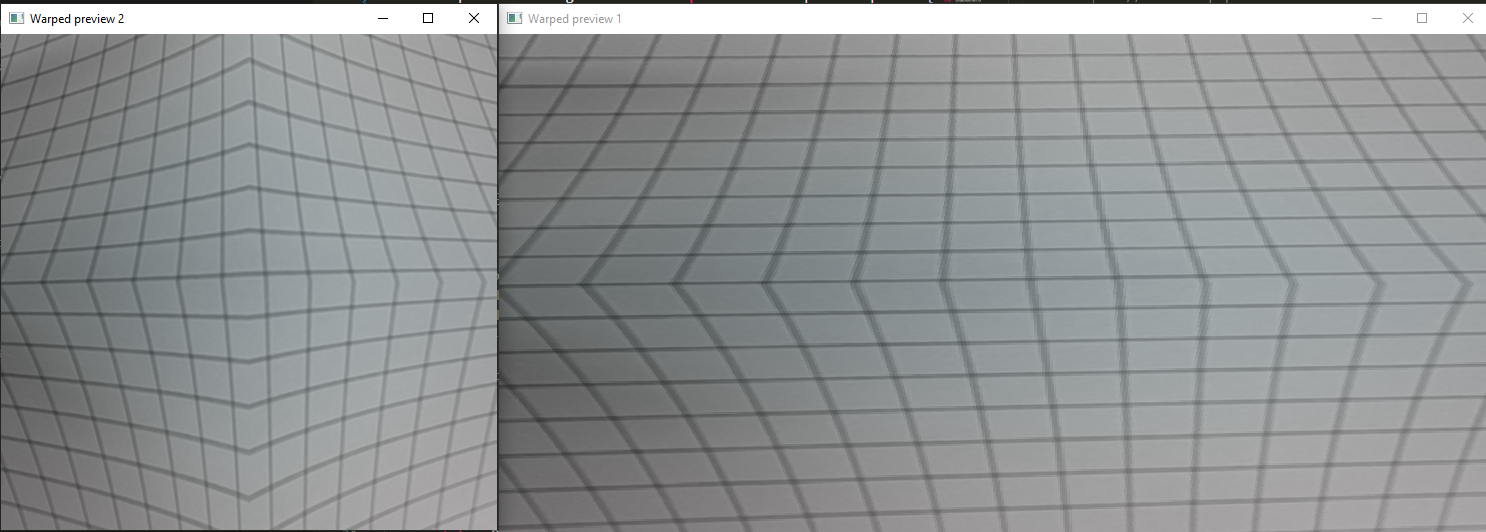

14# Warp preview frame 1

15warp1 = pipeline.create(dai.node.Warp)

16# Create a custom warp mesh

17tl = dai.Point2f(20, 20)

18tr = dai.Point2f(460, 20)

19ml = dai.Point2f(100, 250)

20mr = dai.Point2f(400, 250)

21bl = dai.Point2f(20, 460)

22br = dai.Point2f(460, 460)

23warp1.setWarpMesh([tl,tr,ml,mr,bl,br], 2, 3)

24WARP1_OUTPUT_FRAME_SIZE = (992,500)

25warp1.setOutputSize(WARP1_OUTPUT_FRAME_SIZE)

26warp1.setMaxOutputFrameSize(WARP1_OUTPUT_FRAME_SIZE[0] * WARP1_OUTPUT_FRAME_SIZE[1] * 3)

27warp1.setHwIds([1])

28warp1.setInterpolation(dai.Interpolation.NEAREST_NEIGHBOR)

29

30camRgb.preview.link(warp1.inputImage)

31xout1 = pipeline.create(dai.node.XLinkOut)

32xout1.setStreamName('out1')

33warp1.out.link(xout1.input)

34

35# Warp preview frame 2

36warp2 = pipeline.create(dai.node.Warp)

37# Create a custom warp mesh

38mesh2 = [

39 (20, 20), (250, 100), (460, 20),

40 (100, 250), (250, 250), (400, 250),

41 (20, 480), (250, 400), (460,480)

42]

43warp2.setWarpMesh(mesh2, 3, 3)

44warp2.setMaxOutputFrameSize(maxFrameSize)

45warp1.setHwIds([2])

46warp2.setInterpolation(dai.Interpolation.BICUBIC)

47

48camRgb.preview.link(warp2.inputImage)

49xout2 = pipeline.create(dai.node.XLinkOut)

50xout2.setStreamName('out2')

51warp2.out.link(xout2.input)

52

53# Connect to device and start pipeline

54with dai.Device(pipeline) as device:

55 # Output queue will be used to get the rgb frames from the output defined above

56 q1 = device.getOutputQueue(name="out1", maxSize=8, blocking=False)

57 q2 = device.getOutputQueue(name="out2", maxSize=8, blocking=False)

58

59 while True:

60 in1 = q1.get()

61 if in1 is not None:

62 cv2.imshow("Warped preview 1", in1.getCvFrame())

63 in2 = q2.get()

64 if in2 is not None:

65 cv2.imshow("Warped preview 2", in2.getCvFrame())

66

67 if cv2.waitKey(1) == ord('q'):

68 breakPipeline
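setWarpMesh() receives the mesh vertices as one flat list plus the mesh width and height. The point names in the first mesh (tl, tr, ml, mr, bl, br) suggest the list is read row by row, left to right. The sketch below shows that layout, assuming row-major order; `mesh_rows` is a hypothetical helper for inspecting a mesh, not part of DepthAI.

```python
from typing import List, Sequence, Tuple

# An (x, y) mesh vertex; assumed to be in input-image pixel coordinates
Point = Tuple[float, float]


def mesh_rows(points: Sequence[Point], width: int, height: int) -> List[List[Point]]:
    """Split a flat warp-mesh point list into `height` rows of `width` points.

    Assumes row-major order: left to right within a row, rows top to bottom.
    """
    if len(points) != width * height:
        raise ValueError(f"expected {width * height} points, got {len(points)}")
    return [list(points[r * width:(r + 1) * width]) for r in range(height)]


# warp1's vertices (width 2, height 3) written as plain (x, y) tuples
mesh1 = [(20, 20), (460, 20), (100, 250), (400, 250), (20, 460), (460, 460)]
for row in mesh_rows(mesh1, 2, 3):
    print(row)
```

Each printed row pairs a left and a right vertex (top, middle, bottom), which matches the tl/tr, ml/mr, bl/br naming in the example.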
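Both setMaxOutputFrameSize() calls size the output buffer in bytes as width * height * 3, i.e. one byte per channel for a three-channel frame. A small sketch of that arithmetic for the two Warp nodes; `max_frame_bytes` is a hypothetical helper, not a DepthAI function.

```python
def max_frame_bytes(width: int, height: int, channels: int = 3) -> int:
    """Bytes for one 8-bit frame: one byte per channel per pixel."""
    return width * height * channels


# warp1 output: 992 x 500 pixels
print(max_frame_bytes(992, 500))  # 1488000
# warp2 reuses the 496 x 496 preview size (maxFrameSize in the example)
print(max_frame_bytes(496, 496))  # 738048
```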
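The example only hands mesh vertices to the Warp node; the per-pixel work happens on the device. To give a feel for what a warp mesh encodes, the sketch below uses generic bilinear mesh interpolation, a common way such meshes are evaluated. It assumes the vertices are input-image coordinates spread evenly over the output frame. This is an illustration under those assumptions, not DepthAI's hardware implementation, and `source_coord` is a hypothetical helper.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]


def source_coord(mesh: Sequence[Point], mesh_w: int, mesh_h: int,
                 out_w: int, out_h: int, x: int, y: int) -> Point:
    """Map output pixel (x, y) to a source-image coordinate.

    Assumes (hypothetically) that the row-major mesh is spread evenly over the
    output frame and that points between vertices are interpolated bilinearly.
    """
    if out_w < 2 or out_h < 2:
        raise ValueError("output frame must be at least 2x2")
    # Position of the pixel on the mesh grid, in units of mesh cells
    gx = x / (out_w - 1) * (mesh_w - 1)
    gy = y / (out_h - 1) * (mesh_h - 1)
    # Top-left vertex of the enclosing cell, clamped so the last row/column stays valid
    i = min(int(gx), mesh_w - 2)
    j = min(int(gy), mesh_h - 2)
    fx, fy = gx - i, gy - j  # fractional position inside the cell

    def vertex(col: int, row: int) -> Point:
        return mesh[row * mesh_w + col]  # row-major lookup

    p00, p10 = vertex(i, j), vertex(i + 1, j)
    p01, p11 = vertex(i, j + 1), vertex(i + 1, j + 1)
    # Bilinear weights of the four cell corners
    w00, w10 = (1 - fx) * (1 - fy), fx * (1 - fy)
    w01, w11 = (1 - fx) * fy, fx * fy
    sx = w00 * p00[0] + w10 * p10[0] + w01 * p01[0] + w11 * p11[0]
    sy = w00 * p00[1] + w10 * p10[1] + w01 * p01[1] + w11 * p11[1]
    return sx, sy


# mesh2 from the example (3 x 3 vertices, row-major), on a tiny 5 x 5 output
mesh2 = [
    (20, 20), (250, 100), (460, 20),
    (100, 250), (250, 250), (400, 250),
    (20, 480), (250, 400), (460, 480),
]
print(source_coord(mesh2, 3, 3, 5, 5, 0, 0))  # (20.0, 20.0)
print(source_coord(mesh2, 3, 3, 5, 5, 2, 2))  # (250.0, 250.0)
print(source_coord(mesh2, 3, 3, 5, 5, 1, 0))  # (135.0, 60.0)
```

Output corners and the center land exactly on mesh vertices, while pixels in between blend the surrounding vertices. In a full warp, the source image would then be sampled at each returned coordinate, for instance with the nearest-neighbor or bicubic interpolation selected via setInterpolation().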

Need assistance?

Head over to Discussion Forum for technical support or any other questions you might have.